- What Are Shopping Bots?

- How Shopping Bots Work (From Request to Checkout or Data Extraction)

- Common Use Cases for Shopping Bots

- Challenges of Running Shopping Bots in the Real World

- Choosing the Right Proxy Setup for Shopping Bots

- Best Practices for Running Shopping Bots at Scale

- Frequently Asked Questions

Shopping bots have become a core tool for automating how businesses interact with e-commerce web data – from monitoring prices and tracking inventory to executing purchases in real time. In this article, we’ll break down how shopping bots work, explore their most common use cases, and look at the challenges of running them at scale, along with the infrastructure and web scraping best practices needed to keep them reliable.

What Are Shopping Bots?

Shopping bots are automated programs designed to interact with e-commerce websites in place of a human user. Depending on their purpose, they can browse shop pages, extract structured product data, monitor changes in pricing or availability, or even complete purchases automatically. While the term often gets associated with high-demand product drops, the underlying concept is much broader – and far more relevant to everyday business operations than it might seem at first glance.

How Shopping Bots Work (From Request to Checkout or Data Extraction)

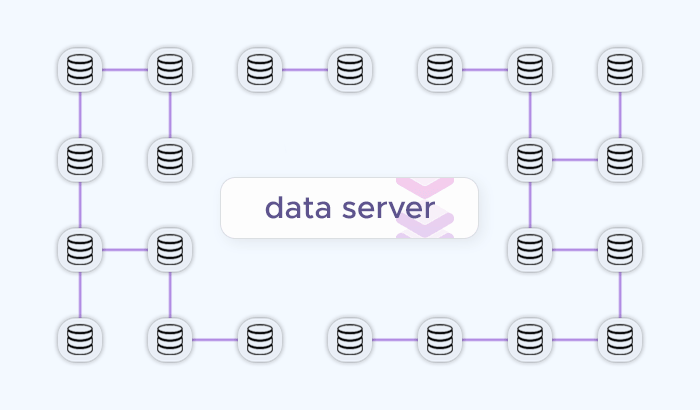

Behind the scenes, shopping bots follow a fairly consistent web scraping workflow: they request data from a target website, interpret the response, and either extract information or take an action. While the exact implementation varies – from lightweight scripts to headless browser automation – the underlying sequence remains the same.

Targeting Products and Endpoints

Every shopping bot starts by identifying what it needs to interact with. This could be a product page, a category listing, a search result, or even an internal API endpoint used by the website. In simpler setups, bots send direct HTTP requests to these URLs and retrieve raw HTML or JSON data. More advanced systems map how a site loads content dynamically, replicating the exact requests a browser would make in the background.

This stage often determines efficiency. Well-targeted requests reduce unnecessary load and speed up web scraping, while poorly structured targeting can lead to redundant traffic and quicker detection.
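As a rough illustration of keeping targets tight, the sketch below canonicalizes product URLs and drops duplicates before any request is sent. The `TRACKING_PARAMS` set, the function names, and the example domain are assumptions made for this example, not part of any specific site or tool.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical query parameters that do not change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref"}


def canonicalize(url: str) -> str:
    """Normalize a URL so that equivalent targets compare equal."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS
    ]
    query.sort()
    path = parts.path.rstrip("/") or "/"
    # The fragment is dropped because it is never sent to the server.
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), "")
    )


def dedupe_targets(urls):
    """Return canonical URLs in first-seen order, without duplicates."""
    seen = set()
    result = []
    for url in urls:
        key = canonicalize(url)
        if key not in seen:
            seen.add(key)
            result.append(key)
    return result
```

Running the target list through a step like this means two links to the same product page result in one request instead of two.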

Sending Requests and Simulating User Behavior

Once targets are defined, the bot begins sending requests. At a small scale, this might look like sequential queries from a single IP address. At a larger scale, however, repeating the same request pattern quickly triggers defenses such as CAPTCHAs or blocks, because websites monitor request frequency, headers, and behavioral patterns to distinguish bots from real users.

To remain effective, shopping bots typically vary how requests are sent. This can include rotating IP addresses, adjusting headers, or simulating realistic browsing behavior. For bots that rely on headless browsers, this step may also involve rendering pages, executing JavaScript, and mimicking user interactions like scrolling or clicking, often with traffic routed through residential proxies.
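To make the idea of varying requests more concrete, here is a minimal sketch that builds a request plan: each URL gets the next proxy in a rotation, a randomly chosen User-Agent, and a jittered delay. It only plans requests and sends nothing. The proxy addresses, header values, and the `plan_requests` name are placeholders assumed for illustration.

```python
import itertools
import random


def plan_requests(urls, proxies, user_agents, base_delay=2.0, jitter=1.0, rng=None):
    """Assign a proxy, headers, and a randomized delay to each URL."""
    rng = rng or random.Random()
    proxy_cycle = itertools.cycle(proxies)  # simple round-robin rotation
    plan = []
    for url in urls:
        plan.append(
            {
                "url": url,
                "proxy": next(proxy_cycle),
                "headers": {
                    "User-Agent": rng.choice(user_agents),
                    "Accept-Language": "en-US,en;q=0.9",  # assumed default
                },
                # Wait between base_delay and base_delay + jitter seconds.
                "delay": base_delay + rng.uniform(0, jitter),
            }
        )
    return plan
```

Passing a seeded `random.Random` as `rng` makes the plan reproducible, which helps when debugging why a particular run was blocked.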

Parsing Data or Triggering Actions

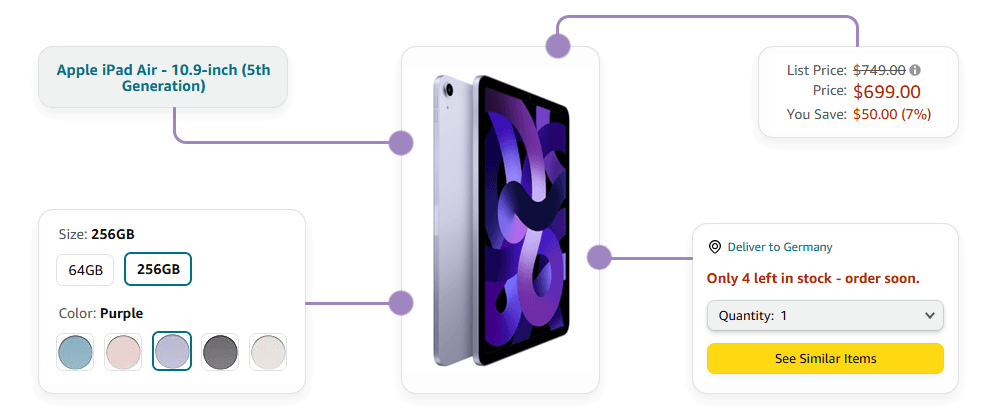

After receiving a response, the bot processes the content. For web scraping use cases, this means extracting structured information such as product names, prices, availability, or seller details. The extracted web data is then normalized and stored for further analysis.
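Normalization is where a lot of quiet errors creep in, so a small example may help. The sketch below turns raw scraped strings into a consistent record. The field names (`name`, `price`, `availability`) and the separator heuristic are assumptions for this example; real sites will need their own rules.

```python
import re
from decimal import Decimal


def parse_price(raw: str) -> Decimal:
    """Parse strings like '$1,299.99' or '1.299,99 €' into a Decimal."""
    digits = re.sub(r"[^\d.,]", "", raw)
    if not re.search(r"\d", digits):
        raise ValueError(f"no price found in {raw!r}")
    # Heuristic: a final '.' or ',' followed by 1-2 digits is the decimal mark.
    match = re.search(r"[.,](\d{1,2})$", digits)
    if match:
        whole = re.sub(r"[.,]", "", digits[: match.start()])
        return Decimal(f"{whole or '0'}.{match.group(1)}")
    # Otherwise every separator is treated as a thousands separator.
    return Decimal(re.sub(r"[.,]", "", digits))


def normalize_product(raw: dict) -> dict:
    """Convert a raw scraped record into a consistent structure."""
    return {
        "name": " ".join(raw["name"].split()),  # collapse stray whitespace
        "price": parse_price(raw["price"]),
        "in_stock": raw.get("availability", "").strip().lower() == "in stock",
    }
```

Using `Decimal` rather than `float` avoids rounding surprises when prices are later compared or summed.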

For purchase automation, the process is more interactive. Instead of just reading data, the bot follows a sequence of actions – adding items to a cart, submitting forms, and completing checkout flows. This requires handling tokens, cookies, and session data correctly, since even small inconsistencies can break the checkout flow.

Managing Sessions and State

As bots move beyond one-off requests, they need to maintain state across multiple interactions. This is especially important for login flows, carts, or multi-step navigation. Session management uses cookies or tokens that persist between requests, allowing the bot to appear as a continuous user rather than a series of disconnected visits.

Maintaining session integrity becomes more challenging at scale. If requests originate from inconsistent locations or environments, sessions may be invalidated or flagged as suspicious, interrupting the workflow.
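One way to picture this is a session object that keeps cookies, proxy, and User-Agent together, so every request in a workflow comes from the same apparent user. The `BotSession` class below is a hypothetical sketch of that idea, using only the standard library to parse `Set-Cookie` headers.

```python
from dataclasses import dataclass, field
from http.cookies import SimpleCookie


@dataclass
class BotSession:
    """Keeps the identity of one logical user stable across requests."""

    session_id: str
    proxy: str        # the same exit IP is reused for the whole session
    user_agent: str   # the same browser identity is reused as well
    cookies: dict = field(default_factory=dict)

    def request_headers(self) -> dict:
        """Build headers that carry the session's cookies forward."""
        headers = {"User-Agent": self.user_agent}
        if self.cookies:
            headers["Cookie"] = "; ".join(
                f"{name}={value}" for name, value in self.cookies.items()
            )
        return headers

    def update_from_response(self, set_cookie_headers) -> None:
        """Store cookies from a response's Set-Cookie header values."""
        for header in set_cookie_headers:
            parsed = SimpleCookie()
            parsed.load(header)
            for name, morsel in parsed.items():
                self.cookies[name] = morsel.value
```

Binding the proxy to the session is the design choice that matters here: swapping the exit IP halfway through a cart flow is exactly the kind of inconsistency that gets sessions invalidated.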

Common Use Cases for Shopping Bots

Shopping bots are often associated with hype-driven product drops, but in practice, their most valuable applications are far less visible. Across industries, they power continuous web scraping, real-time monitoring, and automated decision-making – all built on the ability to reliably interact with e-commerce platforms at scale.

Price Monitoring

One of the most common use cases is competitor price tracking. Shopping bots continuously collect product pricing, discounts, and promotional data, feeding it into analytics systems that help teams adjust their own pricing strategies.

This becomes especially important in markets where prices fluctuate frequently or differ by location. A single product might appear at different price points depending on the user’s country, device, or browsing context. Bots make it possible to capture this variation consistently, rather than relying on manual price checks that quickly become outdated.

Inventory and Availability Tracking

For businesses that depend on timely information, knowing when a product goes in or out of stock can be just as important as its price. Shopping bots monitor product pages and notify systems the moment availability changes, enabling faster reactions to restocks, shortages, or sudden demand spikes.

This use case is particularly relevant for high-turnover industries such as electronics, retail, or travel-related inventory, where delays in detecting changes can translate directly into missed opportunities.
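At its simplest, availability tracking is a comparison between two snapshots. The sketch below assumes each snapshot is a dictionary mapping a SKU to a boolean in-stock flag; the function name and the event labels are illustrative choices.

```python
def availability_changes(previous: dict, current: dict) -> list:
    """Compare two {sku: in_stock} snapshots and report what flipped."""
    changes = []
    for sku, in_stock in current.items():
        before = previous.get(sku)
        # SKUs seen for the first time are skipped: there is no prior state.
        if before is not None and before != in_stock:
            changes.append((sku, "restocked" if in_stock else "sold_out"))
    return changes
```

The returned events can then be pushed to whatever notification channel the team already uses.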

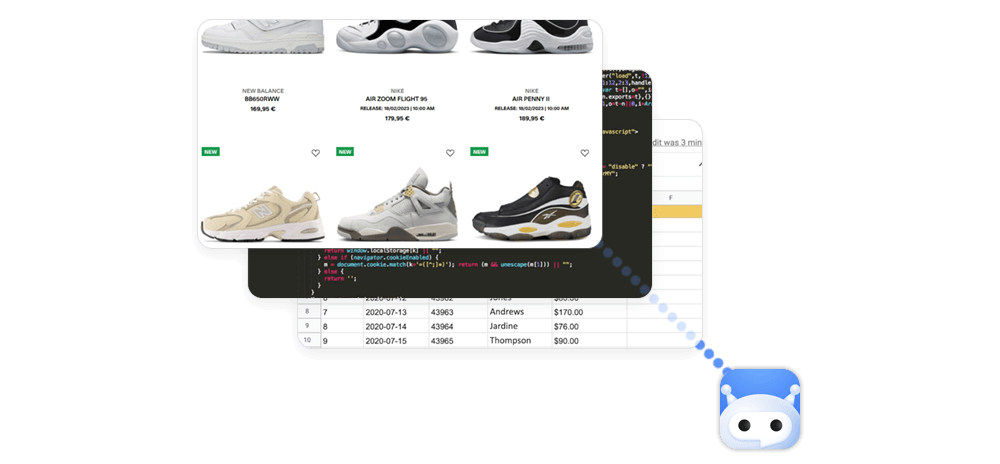

Marketplace Aggregation

Many companies rely on web data from multiple platforms like Amazon, eBay, and Walmart rather than a single source. Shopping bots can collect listings, product details, and seller information across different e-commerce platforms, standardizing product data into a unified format.

This aggregated view supports everything from catalog expansion to competitive benchmarking. Instead of treating each marketplace separately, teams gain a consolidated dataset that reflects the broader market landscape.
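Standardizing listings usually comes down to a per-source field mapping. The example below is a minimal sketch with two made-up sources; the source names and field names in `FIELD_MAPS` are hypothetical and do not reflect any real marketplace's data format.

```python
# Hypothetical mappings from each source's field names to a shared schema.
FIELD_MAPS = {
    "marketplace_a": {"title": "name", "price_usd": "price", "merchant": "seller"},
    "marketplace_b": {"product_name": "name", "amount": "price", "sold_by": "seller"},
}


def to_unified(source: str, listing: dict) -> dict:
    """Rename a listing's fields into the shared schema."""
    mapping = FIELD_MAPS[source]
    unified = {"source": source}
    for source_field, unified_field in mapping.items():
        # Missing fields become None so that gaps stay visible downstream.
        unified[unified_field] = listing.get(source_field)
    return unified
```

Keeping the `source` on every record makes it possible to trace a suspicious value back to the platform it came from.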

Automated Purchasing for High-Demand Items

In more time-sensitive scenarios, shopping bots are used to secure products the moment they become available. This is common in limited releases – such as sneakers, event tickets, or popular consumer electronics – where speed is critical and inventory sells out within seconds.

These bots monitor availability in real time and execute the checkout process instantly when conditions are met. While technically demanding, this approach highlights how automation can extend beyond web scraping into direct action.

Localized Data Collection and Market Analysis

E-commerce platforms often tailor content based on the user’s location, meaning that pricing, availability, and even product visibility can vary significantly across regions. Shopping bots allow teams to access these localized versions of a site without being physically present in each market.

By collecting data from multiple geo-targeted locations simultaneously, businesses can analyze regional trends, identify pricing discrepancies, and better understand how their offerings compare across different audiences.
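Once regional prices are collected, spotting discrepancies is a small calculation. The sketch below assumes the prices have already been converted to a single currency; the function name and output keys are chosen for illustration.

```python
def regional_spread(prices_by_region: dict) -> dict:
    """Find the cheapest and priciest regions and the gap between them.

    Assumes all prices are already expressed in the same currency.
    """
    cheapest = min(prices_by_region, key=prices_by_region.get)
    priciest = max(prices_by_region, key=prices_by_region.get)
    low = prices_by_region[cheapest]
    high = prices_by_region[priciest]
    return {
        "cheapest": cheapest,
        "priciest": priciest,
        # Gap expressed as a percentage of the lowest price.
        "spread_pct": round((high - low) / low * 100, 2),
    }
```

For example, prices of 100.0 in the US, 120.0 in Germany, and 90.0 in Japan give a spread of 33.33 percent between Japan and Germany.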

Deal Monitoring and Alerting Systems

Another practical application is tracking price drops and promotional events. Shopping bots can monitor specific products or e-commerce categories and trigger alerts when predefined conditions are met – for example, when a price falls below a certain threshold or a discount appears.

These price monitoring systems are widely used for both internal decision-making and customer-facing tools, such as deal aggregation platforms or notification services.
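The alerting logic itself can be very small. Here is a minimal sketch of a threshold rule, assuming prices arrive as a dictionary keyed by SKU; `PriceRule` and `triggered_alerts` are hypothetical names.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PriceRule:
    sku: str
    threshold: Decimal  # alert when the price falls below this value


def triggered_alerts(rules, latest_prices: dict) -> list:
    """Return (sku, price) pairs for every rule whose condition is met."""
    alerts = []
    for rule in rules:
        price = latest_prices.get(rule.sku)
        # A missing price means the product was not scraped this round.
        if price is not None and price < rule.threshold:
            alerts.append((rule.sku, price))
    return alerts
```

Note the strict comparison: a price exactly at the threshold does not trigger an alert in this version.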

Challenges of Running Shopping Bots in the Real World

Building a shopping bot that works in a controlled environment is relatively straightforward, but running it consistently against real-world e-commerce platforms is where things become significantly more complex.

IP Blocking and Rate Limiting

One of the first obstacles shopping bots encounter is IP-based blocking. When a large number of requests originate from the same address within a short time frame, websites quickly flag this as non-human behavior. The result can range from temporary rate limiting to full IP bans.

At small scales, this might appear as occasional failed requests. At larger scales, it can disrupt entire data pipelines or prevent bots from accessing critical pages. Maintaining a consistent request flow without triggering these limits becomes a balancing act between speed and stability.
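A common way to handle that balancing act is to retry rate-limited requests with exponential backoff and random jitter. The sketch below computes the wait times only. The choice of retryable status codes and the default values are assumptions to tune per target.

```python
import random

# Status codes treated as "slow down and retry" in this sketch.
RETRYABLE_STATUSES = {429, 503}


def should_retry(status_code: int) -> bool:
    return status_code in RETRYABLE_STATUSES


def backoff_delays(max_retries: int, base: float = 1.0, cap: float = 60.0, rng=None):
    """Return one randomized wait time (in seconds) per retry attempt."""
    rng = rng or random.Random()
    delays = []
    for attempt in range(max_retries):
        # The ceiling doubles with each attempt but never exceeds the cap.
        ceiling = min(cap, base * (2 ** attempt))
        # "Full jitter": pick anywhere between zero and the ceiling.
        delays.append(rng.uniform(0, ceiling))
    return delays
```

The jitter matters as much as the doubling: without it, many workers that were blocked at the same moment would all retry at the same moment too.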

Geo-Restricted Content and Pricing Variability

E-commerce platforms frequently tailor content based on a user’s location. Prices, product availability, shipping options, and even search results can vary depending on where the request originates. This creates a challenge for bots that aim to collect accurate, representative data.

Without the ability to access localized versions of a site, the data quickly becomes incomplete or misleading. A bot without geo-targeting might successfully scrape product information, but still miss key regional differences that affect business decisions.

Anti-Bot Systems and Fingerprinting

Beyond simple IP tracking, many platforms use advanced anti-bot systems to identify automated traffic. These systems analyze request patterns, headers, browser fingerprints, and behavioral signals to distinguish bots from real users, and often respond with CAPTCHA challenges.

Even if a bot rotates IP addresses, inconsistencies in how requests are structured or how sessions behave can still reveal automation. As detection methods evolve, bots need to mimic real user behavior more closely, which increases both complexity and resource requirements.

Dynamic Content and JavaScript Rendering

Modern e-commerce sites rarely serve static HTML. Product data is often loaded dynamically through JavaScript, meaning that a simple request may not return all the necessary information. Bots that rely only on raw HTTP requests may miss critical elements such as pricing updates, stock indicators, or personalized recommendations.

To handle this, many setups require headless browsers or rendering engines, which significantly increase computational overhead and slow down data collection. Scaling such systems introduces additional challenges around performance and cost.

Session Management and State Consistency

For bots that interact with multi-step workflows – such as login processes, carts, or checkout – maintaining session continuity is essential. Sessions rely on cookies, tokens, and consistent request environments. If these elements change unexpectedly, sessions can expire or be flagged as suspicious.

At scale, keeping sessions stable across thousands of concurrent interactions becomes increasingly difficult. Small inconsistencies can cascade into failed transactions or incomplete workflows.

Scaling Without Losing Reliability

Perhaps the most underestimated challenge is scaling a shopping bot without degrading its performance. As request volume increases, so does the likelihood of encountering blocks, timeouts, or inconsistent responses. What works at a few hundred requests may break entirely at a few thousand.

This turns scaling into more than just increasing throughput. It requires careful coordination of request distribution, geographic coverage, and success rate monitoring. Without this, higher volume does not translate into better results – it simply amplifies existing weaknesses.

Choosing the Right Proxy Setup for Shopping Bots

Selecting the right proxy type, such as residential or datacenter proxies, has a direct impact on how reliable, scalable, and cost-efficient your shopping bot will be. Different tasks – from large-scale data collection to session-heavy checkout flows – require different network characteristics. The table below compares common proxy options in the context of real-world shopping bot use cases.

| Proxy Type | Best For | Strengths | Limitations | Typical Shopping Bot Use Cases |
|---|---|---|---|---|
| Residential Proxies | High success-rate data collection | Real-user IPs, strong anti-bot evasion, wide geo coverage | Higher cost compared to datacenter proxies | Price monitoring, localized scraping, competitor monitoring |
| Datacenter Proxies (Shared/Dedicated) | High-volume, fast requests | Low latency, cost-efficient, scalable throughput | More likely to be flagged or blocked on strict targets | Bulk product scraping, marketplace aggregation, non-sensitive targets |
| Static ISP Proxies | Session persistence and stability | Residential-grade trust with static IP consistency | Smaller pool size compared to rotating residential IPs | Login flows, cart management, multi-step checkout processes |
| Mobile Proxies | Maximum trust environments | Carrier-grade IPs, highly trusted, difficult to block | Higher cost, slower speeds, limited concurrency | Strict anti-bot targets, sensitive purchase automation flows |
| Residential IPv6 Proxies | Large-scale distributed crawling | Massive IP pool, efficient scaling, lower cost per IP | Limited support on some websites, not always needed for session-heavy tasks | Wide coverage scraping, large catalog monitoring across regions |

In practice, most scalable shopping bot setups don’t rely on a single proxy type. Instead, they combine multiple options – using high-trust IPs for sensitive interactions and high-speed networks for bulk data collection. Matching the proxy setup to the specific task is what ultimately determines how well a bot performs under real-world conditions.

Best Practices for Running Shopping Bots at Scale

Getting a shopping bot to work is one thing. Keeping it reliable as request volume grows, targets change, and defenses evolve is a different challenge altogether. At scale, success depends less on the core logic and more on how well the overall system adapts to real-world conditions – balancing efficiency, access, and consistency across thousands of interactions.

Treat Success Rate as the Primary Metric

It’s easy to focus on how many requests a bot sends, but volume alone doesn’t translate into useful results. What matters is how many of those requests actually return valid data or complete the intended action. A smaller number of successful requests is often more valuable than a large volume of blocked or incomplete ones.

Monitoring success rates in real time helps identify issues early, whether they stem from IP blocks, parsing errors, or changes in the target site. This shifts the focus from raw throughput to meaningful output.
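A rolling window is one simple way to watch success rates in real time. The `SuccessTracker` class below is a hypothetical sketch; the window size and alert threshold are assumptions to adjust for your own traffic.

```python
from collections import deque


class SuccessTracker:
    """Tracks the success rate over the most recent requests."""

    def __init__(self, window: int = 100):
        # Old results fall off automatically once the window is full.
        self._results = deque(maxlen=window)

    def record(self, ok: bool) -> None:
        self._results.append(ok)

    def rate(self):
        """Return the success rate in the window, or None with no data."""
        if not self._results:
            return None
        return sum(self._results) / len(self._results)

    def below(self, threshold: float) -> bool:
        """True when enough data exists and the rate is under the threshold."""
        current = self.rate()
        return current is not None and current < threshold
```

What counts as `ok` is up to the caller. A response with status 200 that contains a block page or an empty price field should usually be recorded as a failure.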

Match Infrastructure to the Task

Different stages of a shopping bot workflow have different requirements. High-frequency data collection benefits from the speed and scale of datacenter proxies, while login flows or checkout processes require the stability and trust of static ISP, residential, or mobile proxies. Using the same setup for everything often leads to inefficiencies or failures.

A more effective approach is to align infrastructure with the task at hand – prioritizing reliability where sessions matter and throughput where coverage is the goal. This reduces unnecessary overhead and improves overall performance.
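In code, this alignment can start as nothing more than a lookup table. The mapping below loosely follows the proxy comparison earlier in this article; the task names and the default are assumptions for illustration.

```python
# Hypothetical task names mapped to the proxy type that suits them.
PROXY_FOR_TASK = {
    "bulk_scrape": "datacenter",       # throughput matters most
    "price_monitoring": "residential", # success rate and geo coverage
    "checkout": "static_isp",          # stable IP for multi-step sessions
    "strict_target": "mobile",         # highest trust, highest cost
}


def pick_proxy_type(task: str, default: str = "residential") -> str:
    """Choose a proxy type for a task, falling back to a safe default."""
    return PROXY_FOR_TASK.get(task, default)
```

Keeping the routing in one place makes it easy to change a task's proxy type when success rates for that task start to drop.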

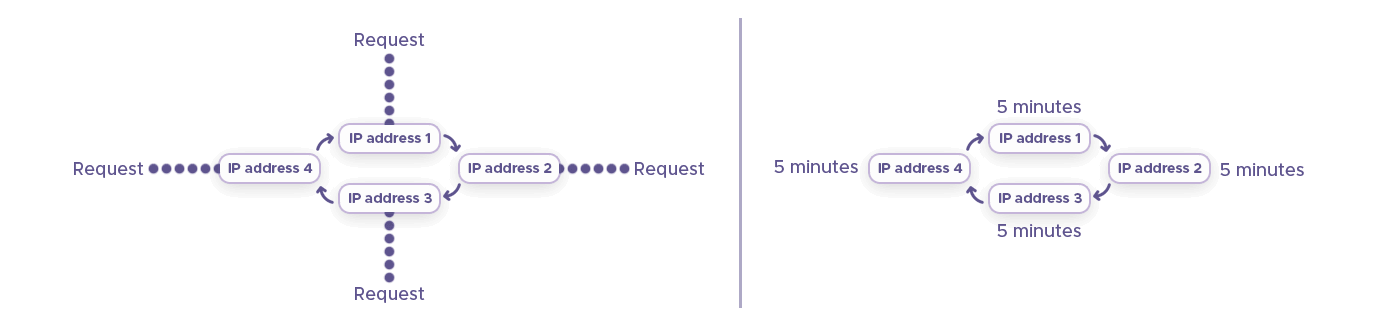

Distribute Requests Intelligently

Scaling isn’t just about sending more requests; it’s about spreading them in a way that mimics natural traffic patterns. Concentrated bursts from a single source are more likely to trigger rate limits or detection systems, even if the total volume is relatively low.

Distributing requests via IP rotation, different locations, and time intervals helps maintain consistency and reduces the risk of sudden disruptions. The goal is to avoid patterns that stand out, rather than simply increasing capacity.

Use Geo-Targeting Strategically

For shopping bots, location often defines the data you receive. Prices, availability, and product visibility can vary significantly between regions, which makes geo-targeting through location-specific proxies an essential part of data accuracy.

Instead of treating location as a secondary parameter, it should be built into the collection strategy. This ensures that the data reflects real user experiences across different markets, rather than a single, potentially misleading perspective.

Monitor and Adapt to Target Changes

E-commerce platforms change frequently – from front-end layouts to backend APIs and anti-bot mechanisms. A setup that works today may start failing tomorrow without any obvious warning.

Continuous monitoring allows teams to detect when something breaks, whether it’s a drop in success rates, missing fields in the data, or signs that the bot’s browser fingerprint is being flagged. Adapting quickly to these changes is what keeps a bot operational over the long term.
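Missing fields are often the earliest sign that a layout has changed. The sketch below measures how often each required field is empty across a batch of records; the field list and the 20 percent threshold are assumptions chosen for this example.

```python
# Fields every scraped record is expected to contain (assumed schema).
REQUIRED_FIELDS = ("name", "price", "availability")


def missing_field_rates(records, required=REQUIRED_FIELDS) -> dict:
    """Return the share of records in which each required field is empty."""
    total = len(records)
    if total == 0:
        return {}
    return {
        field: sum(1 for record in records if record.get(field) in (None, "")) / total
        for field in required
    }


def drifted_fields(records, max_missing: float = 0.2) -> list:
    """List the fields that are missing more often than the allowed share."""
    rates = missing_field_rates(records)
    return sorted(field for field, rate in rates.items() if rate > max_missing)
```

A field that suddenly goes from almost never missing to missing in half the records usually points to a changed selector or a new API response shape, not to a real change in the catalog.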