- Use a user agents library

- Use residential proxies from safe locations

- Obey robots.txt and terms of use

- Set a native referrer source

- Set a limited number of requests

- Change patterns

- Beware of red flag search operators

- Establish a decent rotation

- Make sure the provider replaces proxies

- Frequently Asked Questions

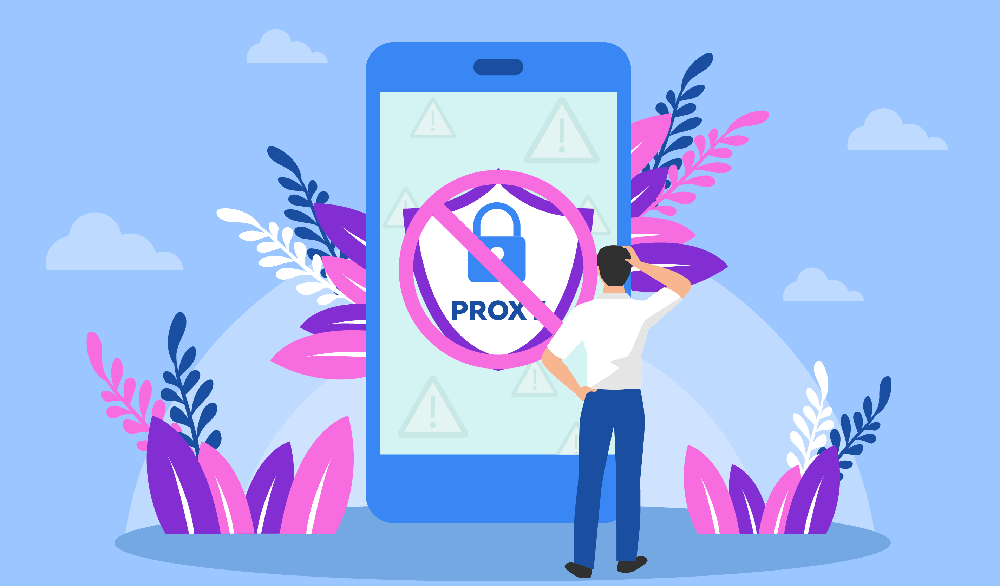

Proxies are vital for successful scraping. However, if you don’t manage them and your scraper properly, the IPs from your pool will constantly get blocked. Blocks slow down the data collection process and quickly drain the proxy pool your provider granted you access to.

These tips will help you smooth out the data gathering and make sure you lose as few IPs as possible during scraping. The actions are very simple, but they will make a significant difference.

1. Use a user agents library

Even if you’re applying a new IP address for each request (e.g. when using proxies for sneaker bots), the same user agent will give you away and get your proxies blocked. The HTTP request header contains quite a lot of information about you and the device you’re using. So it’s a piece of cake for a destination server to tell that something is off if requests from different IPs come with the same user agent.

Moreover, usually, scrapers send an empty header which is even worse. Then the destination server can detect that it’s dealing with a bot right away because real users always have data in their user agents. That’s why you have to configure your proxies and the scraper to send different headers with new requests. It is a widely-used practice, and you will even find user agent libraries on the Internet. You can feed it to your scraper so that it can use various headers.

2. Use residential proxies from safe locations

It’s easy to tell which country the visitor is from by their IP address. To not raise suspicions, it’s better to use proxies from the same place where the destination server is located. If, for example, a German e-commerce store that ships products only within Germany receives traffic from the USA, it is very odd. It can become a red flag for the server and, as a result, this IP address gets banned.

Also, some locations are considered suspicious by default. For instance, many European and American websites won’t allow users from Russia, China, and the Middle East because malefactors often come from these locations.

So avoid using IP addresses from such countries if you’re working with servers located in Europe (e.g. Germany, Netherlands, England, etc.), the States, Australia, or Canada. Obviously, if you’re gathering data from Russian websites, it will be only logical to use Russian IPs. Infatica offers a very wide range of locations, so using our proxies you will never feel limited.

3. Obey robots.txt and terms of use

Every site has its rules that are registered in robots.txt and terms of use. Often, these rules outline which content can be used by visitors and how. Also, robots.txt controls crawlers and the pages they are allowed to access. Of course, you can bypass the restriction and get on restricted pages. But it will most likely get your IP address blocked. Moreover, it’s not ethical to break the rules set by the website.

It’s also useful to go through terms of use and see if website owners have set any specific rules about the content. If you ignore those terms, an owner of the content you’re gathering has the right to sue you for jeopardizing their intellectual property.

4. Set a native referrer source

The referrer is similar to the user agent — it provides the destination server with the information about the user. The difference is that referrers tell the site where the user comes from — the source that contained the link to the page. It can be some social media platform, another website, or a search engine.

The traffic that had no referrer shows up as direct traffic. It can come from a normal user behavior — your request won’t have a source if you type in the URL right into the web address field of your browser. But this kind of behavior is rare, especially if we’re talking about pages with long addresses or ones that involve a random set of symbols.

So empty referrers can become the reason why a destination server blocks your IP. It’s even worse if you set a referrer to be some site that can’t really send so many referred users. Then the bot activity is very easy to spot. That’s why you need to use native referrer sources considering websites you’re working with and your location. For instance, if you’re scraping eBay, you want requests to pages to be referred by eBay.

5. Set a limited number of requests

If your scraper is sending requests at an insanely fast pace, the destination server will detect this activity and block it because most servers are protected from DDoS attacks. And a scraper that sends tons of requests looks like a malefactor who’s trying to put the site down.

Sure, raising the number of requests seems logical if you want to speed up data gathering. But in reality, this will only set you back as, for instance, your sneaker bot proxies will get blocked all the time. Implement a rate limit to make sure your scraper is not sending 10 requests within a second. Also, set breaks between requests — a 2-second delay can save you a lot of proxies.

6. Change patterns

It’s easy to spot a pattern in the behavior of the bot. And servers with advanced anti-scraping measures and protection from attacks can detect repetitive patterns. In this case, you can avoid blocks by setting your scraper to be a bit random — for instance, show some cursor movement, random scrolls, and some clicks. This behavior will look human and natural.

7. Beware of red flag search operators

Google has a whole list of search operators, but some of them can lead to CAPTCHA. One of the good examples of a red flag operator is intitle and inurl parameters as they’re often used for stealing the content.

So if you’re performing bulk searches, it’s better to not use search operators. But if you can’t avoid using them, take all the tips we’ve listed in the article and go extra with them. Set longer delays, implement more random actions, use more IPs, user agents, and so on. This will help you to minimize the risk of facing a CAPTCHA and getting blocked.

8. Establish a decent rotation

This is an obvious tip that, nonetheless, often remains forgotten. Each new request should have another IP address if you’re sending requests to the same server. Otherwise, the site will quickly suspect something and block you. That’s why you need quite a lot of IPs for scraping. Using Infatica you will have access to a very large pool of proxies — we offer over one million residential IP addresses.

9. Make sure the provider replaces proxies

Any rotation pattern will be useless if you have a small pool of IPs, and you get each of them blocked. In this case, your vendor should be able to provide you with more proxies. Infatica offers plans with pools of different sizes so that you can choose the one that fits your needs. Therefore, it’s unlikely that you manage to get the whole pool blocked. However, if you face this issue, we always have new IPs for you. Infatica sources new proxies all the time.

Following these tips, you will keep your data gathering fast and free from blocks. Therefore, you will get to use the same pool of proxies for much longer. These actions are quite effortless, and they might seem insignificant. But if you implement all of them, you will notice the difference.

Frequently Asked Questions

- Open Chrome and click on the three dots in the top right corner.

- Select More tools > Clear browsing data...

- In the Clear browsing data window, make sure that Cookies and other site and plugin data is checked.

- Click on the Clear data button and wait for it to finish processing.

- Reboot your computer and try accessing the website again. If it still doesn't work, you can try using a different browser or changing your DNS settings.