Public web data has become a critical input for modern businesses, from tracking competitor prices to analyzing market trends. Yet understanding how to collect and use that data effectively can be a challenge. In this article, we’ll explain what data scraping is, how it works in practice, the common use cases across industries, and the key challenges teams face when scaling it – along with practical approaches to collecting reliable web data efficiently.

What Is Data Scraping? (And What It’s Not)

At its core, data scraping is the process of automatically collecting publicly available information from websites and turning it into structured, usable data. Instead of manually copying prices, product details, reviews, or listings into a spreadsheet, scraping tools request web pages, read their contents, and extract the specific data points you need – at scale and with consistent formatting.

Most modern websites are built for human visitors, not machines. Their data is presented in visual layouts – tables, cards, buttons, and menus – rather than in clean datasets. Data scraping bridges that gap by interpreting the underlying HTML and converting it into structured outputs such as JSON or CSV. This allows businesses to analyze trends, monitor competitors, or build data-driven products using information that is otherwise scattered across the web.

It’s helpful to distinguish scraping from a few related concepts that are often used interchangeably. Web crawling, for example, refers to the discovery process – systematically navigating through pages and links to find content. Scraping, by contrast, focuses on extracting specific pieces of information from those pages once they’ve been found. APIs sit on the other end of the spectrum: they provide data in a clean, structured format directly from the source, but they’re only available when a website chooses to offer them and typically come with limitations on what you can access.
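To make the distinction concrete, here is a minimal sketch of the crawling half on its own, written in Python with only the standard library. It assumes the page HTML has already been downloaded. The `LinkCollector` class and the `shop.example` URLs are hypothetical. It only discovers same-site links, which a crawler would then queue for fetching and, later, scraping.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Crawling step only: collect same-site links from one page's HTML."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.host = urlparse(base_url).netloc
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        absolute = urljoin(self.base_url, href)  # resolve relative links
        # Stay on the same site and skip links already seen.
        if urlparse(absolute).netloc == self.host and absolute not in self.links:
            self.links.append(absolute)


page_html = """
<a href="/category/shoes?page=2">Next page</a>
<a href="/product/123">Trail Runner</a>
<a href="https://ads.example/banner">Sponsored</a>
"""
collector = LinkCollector("https://shop.example/category/shoes")
collector.feed(page_html)
print(collector.links)
```

The off-site ad link is dropped. The two remaining URLs are what a scraper would visit next to extract product fields.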

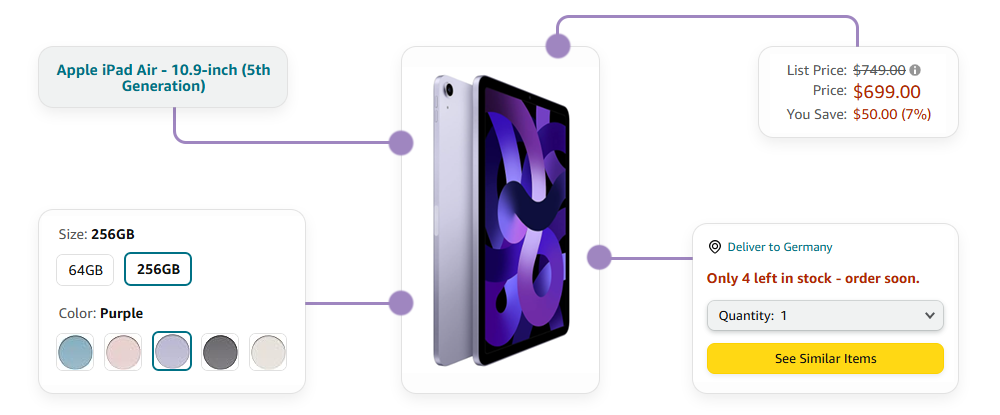

Another common misconception is that scraping simply means copying entire websites. In reality, most business use cases are far more targeted. Teams typically extract a defined set of fields – like product names, prices, availability, or ratings – and normalize that data so it can be compared across multiple sources. This selective, structured approach is what makes scraped data valuable for analytics, automation, and decision-making.

Common Use Cases Across Industries

Web data has become a foundational input for decision-making across many industries, and scraping is often the most practical way to collect it at scale.

Price monitoring and dynamic pricing

E-commerce retailers, marketplaces, and brands track competitor prices across multiple regions and platforms to stay competitive in real time. By continuously collecting product listings, discounts, and stock availability, they can adjust their own pricing strategies, detect undercutting, and respond quickly to market changes.
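Once competitor data has been collected, the undercut check itself is simple. The sketch below assumes prices have already been normalized to one currency. The `Offer` record, the `find_undercuts` function, and the tolerance threshold are illustrative names, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    seller: str
    price: float  # assumed already converted to our own currency
    in_stock: bool


def find_undercuts(our_price: float, offers: list[Offer], tolerance: float = 0.0) -> list[Offer]:
    """Return in-stock competitor offers priced below ours by more than `tolerance`, cheapest first."""
    return sorted(
        (o for o in offers if o.in_stock and o.price < our_price - tolerance),
        key=lambda o: o.price,
    )


offers = [
    Offer("competitor_a", 94.99, True),
    Offer("competitor_b", 89.50, False),  # cheaper, but out of stock
    Offer("competitor_c", 99.00, True),
]
for offer in find_undercuts(our_price=99.99, offers=offers, tolerance=1.00):
    print(f"{offer.seller} undercuts us at {offer.price:.2f}")
```

Ignoring out-of-stock offers avoids reacting to prices customers cannot actually pay.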

Market research and trend analysis

Companies analyze product assortments, customer reviews, and category rankings to understand demand shifts and emerging trends. Instead of relying on periodic reports or surveys, scraping enables near real-time insight into what consumers are buying, how they feel about products, and how competitors are positioning themselves.

Lead generation and sales intelligence

Public business directories, company websites, and professional profiles contain valuable data points – such as company size, industry, or contact information – that can be organized into prospecting lists. With structured data in place, teams can segment and prioritize leads far more effectively.

Ad verification and brand protection

Brands and agencies monitor how their ads are displayed across websites, whether they appear in the correct geographies, and whether unauthorized parties are misusing their creative assets. This helps ensure campaign integrity and protects brand reputation.

Travel and hospitality

Aggregators collect flight, hotel, and rental listings from multiple providers to compare prices, availability, and conditions in one place. This allows them to build comprehensive comparison platforms and give end users more transparency.

How Data Scraping Works (Step by Step)

Although the idea behind data scraping is straightforward, the process itself follows a structured pipeline that turns web pages into usable datasets.

1. Identifying the target source

The first step is deciding where the data lives. This might be a product category page on Amazon, a hotel listing on Booking.com, or a set of search results on Google. At this stage, teams also define exactly which fields they want to extract – such as product names, prices, ratings, or availability – and how frequently they need to collect them.
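Writing the target definition down before any scraping code exists helps keep the scope narrow. Here is a minimal sketch using a hypothetical `ScrapeJob` structure. The field names and URL are made up for illustration.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class ScrapeJob:
    """Hypothetical description of one scraping target, decided up front."""
    name: str
    start_urls: tuple[str, ...]
    fields: tuple[str, ...]  # the data points to extract, nothing more
    frequency: timedelta     # how often the data must be refreshed

    def runs_per_day(self) -> float:
        return timedelta(days=1) / self.frequency


job = ScrapeJob(
    name="running-shoes-prices",
    start_urls=("https://shop.example/category/running-shoes",),
    fields=("name", "price", "currency", "rating", "in_stock"),
    frequency=timedelta(hours=6),
)
print(job.runs_per_day())  # 4.0
```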

2. Sending the request

A scraping script or tool makes HTTP requests to the target pages, similar to how a browser loads a website. The server responds with the page content, typically in HTML. For simple pages, this raw HTML already contains the data. For more complex sites that rely heavily on JavaScript, an additional rendering step may be needed to load dynamic content before extraction can begin.
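This step can be sketched with `urllib.request` from Python's standard library. The `build_request` and `fetch` helpers and the `User-Agent` string are hypothetical. The example only constructs the request, and `fetch` would perform the actual network call. Pages that need JavaScript rendering are out of scope here.

```python
import urllib.request

USER_AGENT = "example-scraper/0.1 (+https://example.com/contact)"  # hypothetical identifier


def build_request(url: str) -> urllib.request.Request:
    """Prepare a GET request with explicit headers, much as a browser would send."""
    return urllib.request.Request(
        url,
        headers={
            "User-Agent": USER_AGENT,
            "Accept": "text/html,application/xhtml+xml",
            "Accept-Language": "en-US,en;q=0.9",
        },
        method="GET",
    )


def fetch(url: str, timeout: float = 10.0) -> str:
    """Send the request and return the response body (raw HTML) as text."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


req = build_request("https://shop.example/category/running-shoes")
# urllib normalizes header names with str.capitalize(), hence "User-agent".
print(req.get_method(), req.full_url, req.get_header("User-agent"))
```

Calling `fetch(...)` on a real URL returns the raw HTML that the parsing step works on.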

3. Parsing HTML and identifying relevant elements

Once the page content is available, the scraper parses the HTML into a document tree and uses selectors – such as CSS selectors or XPath expressions – to pinpoint exactly where the desired data lives within the page structure. It then extracts those values and converts them into a structured format like JSON or CSV.
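As a rough illustration, Python's built-in `xml.etree.ElementTree` understands a subset of XPath that is enough to select elements by tag and attribute. The sketch assumes a small, well-formed snippet with hypothetical `product` and `price` class names. Real-world HTML is often not valid XML, so production scrapers typically use a dedicated HTML parser such as lxml or BeautifulSoup instead.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

# A well-formed stand-in for a fetched page (hypothetical markup).
PAGE = """
<html><body>
  <div class="product"><h2>Trail Runner</h2><span class="price">$89.99</span></div>
  <div class="product"><h2>Road Racer</h2><span class="price">$120.00</span></div>
</body></html>
"""

root = ET.fromstring(PAGE.strip())
records = []
# ElementTree supports [@attr='value'] predicates from XPath.
for card in root.findall(".//div[@class='product']"):
    records.append({
        "name": card.findtext("h2"),
        "price": card.findtext("span[@class='price']"),
    })

print(json.dumps(records))  # structured JSON output

buffer = io.StringIO()  # the same records as CSV
writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
writer.writeheader()
writer.writerows(records)
print(buffer.getvalue())
```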

4. Data cleaning

Raw extracted values are rarely consistent across pages or sources, so this step makes them comparable. For example, prices might be converted into the same currency, dates standardized into a single format, and duplicate entries removed. Clean data is essential for analysis, reporting, and integration into downstream systems.
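A minimal cleaning pass covering those three examples might look like the sketch below. The exchange rates are hypothetical fixed values. The date formats are assumptions, and `01/05/2024` is read day-first here. Deduplication treats the same product name on the same date as one record.

```python
from datetime import datetime
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical fixed rates; a real pipeline would load current exchange rates.
RATES_TO_USD = {"USD": Decimal("1"), "EUR": Decimal("1.10"), "GBP": Decimal("1.25")}
SYMBOLS = {"$": "USD", "€": "EUR", "£": "GBP"}
# Assumed input formats; "%d/%m/%Y" means ambiguous dates are read day-first.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%b %d, %Y")


def to_usd(raw_price: str) -> Decimal:
    """Convert e.g. '€1,100.00' to USD cents; assumes a leading symbol and comma thousands separators."""
    symbol, amount = raw_price[0], raw_price[1:].replace(",", "")
    usd = Decimal(amount) * RATES_TO_USD[SYMBOLS[symbol]]
    return usd.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def to_iso_date(raw_date: str) -> str:
    """Try each known format and return the date as YYYY-MM-DD."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw_date, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {raw_date!r}")


def clean(records: list[dict]) -> list[dict]:
    seen = set()
    cleaned = []
    for record in records:
        row = {
            "name": record["name"].strip(),
            "price_usd": to_usd(record["price"].strip()),
            "date": to_iso_date(record["date"].strip()),
        }
        key = (row["name"].lower(), row["date"])
        if key in seen:  # same product on the same day: keep the first copy
            continue
        seen.add(key)
        cleaned.append(row)
    return cleaned


raw = [
    {"name": "Trail Runner ", "price": "$89.99", "date": "2024-05-01"},
    {"name": "Trail Runner", "price": "$89.99", "date": "01/05/2024"},
    {"name": "Road Racer", "price": "€1,100.00", "date": "May 01, 2024"},
]
for row in clean(raw):
    print(row)
```

`Decimal` is used instead of floats so that converted prices do not pick up binary rounding errors.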

5. Data delivery

Finally, the structured dataset is stored or delivered to its destination – whether that’s a database, a data warehouse, or a business intelligence tool. At this point, the scraped data becomes ready for use in analytics, automation, or product features.
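For a small pipeline, delivery can be as simple as writing rows to a database. This sketch uses Python's built-in `sqlite3` module with an in-memory database. The `prices` table, its columns, and the `deliver` helper are hypothetical. The primary key makes re-running a job idempotent, so repeated runs do not produce duplicates.

```python
import sqlite3


def deliver(rows: list[dict], conn: sqlite3.Connection) -> None:
    """Upsert cleaned rows; delivering the same rows again does not create duplicates."""
    with conn:  # commits on success, rolls back if anything fails
        conn.execute(
            """CREATE TABLE IF NOT EXISTS prices (
                   name       TEXT NOT NULL,
                   price_usd  TEXT NOT NULL,  -- kept as text to preserve exact decimals
                   scraped_on TEXT NOT NULL,
                   PRIMARY KEY (name, scraped_on)
               )"""
        )
        conn.executemany(
            "INSERT OR REPLACE INTO prices (name, price_usd, scraped_on) VALUES (?, ?, ?)",
            [(r["name"], str(r["price_usd"]), r["date"]) for r in rows],
        )


conn = sqlite3.connect(":memory:")
rows = [
    {"name": "Trail Runner", "price_usd": "89.99", "date": "2024-05-01"},
    {"name": "Road Racer", "price_usd": "1210.00", "date": "2024-05-01"},
]
deliver(rows, conn)
deliver(rows, conn)  # a re-run of the same job
print(conn.execute("SELECT COUNT(*) FROM prices").fetchone()[0])  # 2
```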

Key Challenges of Web Scraping at Scale

Scraping a handful of pages for a quick experiment is relatively straightforward. The real complexity begins when you need to collect large volumes of data reliably, across multiple sources, and on a recurring schedule. At that point, web scraping stops being a simple script and becomes an ongoing infrastructure challenge.

Anti-bot protection

Modern websites actively monitor traffic patterns and block requests that look automated. This can include rate limiting, IP blocking, behavioral analysis, and CAPTCHAs designed to distinguish real users from bots. What works for a few requests often breaks down quickly when scaled to thousands or millions.
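Many of these defenses react to request volume, so one thing a scraper fully controls is its own pace. The simple client-side throttle sketched below uses hypothetical names and enforces a minimum interval between requests. It keeps traffic within reasonable limits, but it does not get around CAPTCHAs or behavioral analysis. The clock and sleep functions are injectable so the logic can be tested without real waiting.

```python
import time


class Throttle:
    """Enforce a minimum delay between consecutive requests."""

    def __init__(self, min_interval: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.clock = clock
        self.sleep = sleep
        self._last = None  # time the previous request was allowed to start

    def wait(self) -> float:
        """Block until the next request is allowed; return how long we slept."""
        now = self.clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self._last + self.min_interval - now)
            if delay:
                self.sleep(delay)
        self._last = now + delay
        return delay


throttle = Throttle(min_interval=0.5)  # at most two requests per second
for url in ["https://shop.example/p/1", "https://shop.example/p/2"]:
    waited = throttle.wait()
    print(f"requesting {url} after waiting {waited:.2f}s")
```

In practice, the interval would be tuned per target site.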

Proxy and IP management

To avoid blocks and access geo-specific content, scraping systems need to route requests through different IP addresses and locations. Managing a reliable pool of residential or datacenter IPs, rotating them intelligently, and keeping them clean from bans is a complex task on its own – especially when you need consistent performance across regions.
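Rotation itself can be sketched as a round-robin pool that skips addresses once they get blocked. The proxy URLs below use reserved documentation addresses and are placeholders. `ProxyPool` is a hypothetical helper, while `ProxyHandler` and `build_opener` are standard `urllib.request` functions. A production pool would also track health per region and let banned proxies recover after a cool-down.

```python
import itertools
import urllib.request


class ProxyPool:
    """Round-robin rotation over proxies, skipping any marked as banned."""

    def __init__(self, proxies: list[str]) -> None:
        self.proxies = list(proxies)
        self.banned: set[str] = set()
        self._cycle = itertools.cycle(self.proxies)

    def next_proxy(self) -> str:
        # Check each proxy at most once per call, so a fully banned pool cannot loop forever.
        for _ in range(len(self.proxies)):
            proxy = next(self._cycle)
            if proxy not in self.banned:
                return proxy
        raise RuntimeError("no usable proxies left")

    def mark_banned(self, proxy: str) -> None:
        self.banned.add(proxy)

    def opener(self) -> tuple[str, urllib.request.OpenerDirector]:
        """Return the chosen proxy plus an opener that routes HTTP(S) through it."""
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return proxy, urllib.request.build_opener(handler)


pool = ProxyPool([
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
])
pool.mark_banned("http://203.0.113.11:8080")  # e.g. after it started receiving blocks
print([pool.next_proxy() for _ in range(4)])
```

`opener()` returns the proxy it chose along with the opener, so the caller knows which address to pass to `mark_banned` if a request through it gets blocked.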

Modern, dynamic websites

Many pages no longer return complete data in static HTML. Instead, they load content asynchronously through JavaScript, meaning scrapers must render pages in a headless browser environment before extraction can even begin. This adds significant overhead in terms of computing resources, execution time, and maintenance.

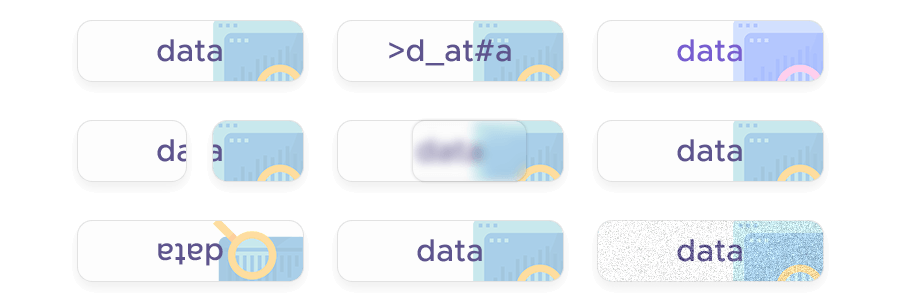

Data consistency

The same product or listing may be formatted differently across websites, currencies may vary, and page structures can change without notice. Scraping systems need to continuously adapt to layout updates and normalize incoming data so that it remains usable and comparable over time.
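One inexpensive safeguard against silent layout changes is to check each batch for fields that suddenly come back empty. The sketch assumes records are dictionaries. The required field names and the 90% threshold are hypothetical and would be tuned per source.

```python
def check_batch(records: list[dict], required_fields=("name", "price"),
                min_fill_rate: float = 0.9) -> list[str]:
    """Return warnings for required fields that are missing in too many records."""
    if not records:
        return ["no records extracted"]
    problems = []
    for field in required_fields:
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        rate = filled / len(records)
        if rate < min_fill_rate:
            problems.append(f"{field}: only {rate:.0%} of records have a value")
    return problems


batch = [
    {"name": "Trail Runner", "price": "$89.99"},
    {"name": "Road Racer", "price": None},  # e.g. the price selector no longer matched
    {"name": "Sprint Flat", "price": ""},
]
print(check_batch(batch))
```

A sudden drop in a field's fill rate usually points to a changed page structure rather than genuinely missing data, so it is a useful trigger for reviewing selectors.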

Operational overhead

Monitoring scraping jobs, handling failures, retrying requests, updating selectors when websites change their structure, and ensuring compliance with legal and ethical standards all require ongoing engineering effort. As the number of targets grows, so does the maintenance burden.
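Retries are usually combined with exponential backoff, so a struggling site is not hit again immediately. The minimal sketch below assumes a hypothetical `TransientError` that the fetching code raises for retryable failures such as timeouts or HTTP 503 responses.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error raised by fetching code for retryable failures."""


def with_retries(task, max_attempts: int = 4, base_delay: float = 1.0,
                 max_delay: float = 30.0, sleep=time.sleep):
    """Call task(); after each TransientError wait roughly base_delay * 2**attempt, then retry."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return task()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to monitoring
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Random jitter keeps many workers from retrying in lockstep.
            sleep(delay + random.uniform(0, delay / 2))


calls = {"count": 0}


def flaky_fetch() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("HTTP 503")
    return "<html>ok</html>"


print(with_retries(flaky_fetch, sleep=lambda seconds: None), calls["count"])
```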

DIY Scraping vs. Scraping APIs vs. Managed Solutions

| Approach | How it works | Advantages | Limitations | Best suited for |
|---|---|---|---|---|
| DIY scraping (in-house scripts) | You build and maintain your own scrapers using tools like Python libraries or browser automation frameworks. | Full control over logic and data flow, flexible customization, low upfront cost. | Breaks easily when sites change; you handle proxy management, CAPTCHAs, and scaling infrastructure yourself, with ongoing maintenance overhead. | Small experiments, prototypes, or teams with strong engineering resources and limited data volume. |
| Scraping APIs | A dedicated API handles requests, rendering, proxy rotation, and anti-bot bypass, returning structured data or raw HTML. | High reliability, scalable infrastructure, reduced maintenance, built-in geo-targeting and JavaScript rendering. | Usage-based cost, less low-level control than fully custom scripts. | Growing teams that need consistent data collection without building infrastructure from scratch. |
| Fully managed scraping solutions | A provider designs, runs, and maintains the entire data pipeline, delivering ready-to-use datasets. | No engineering effort required, highest reliability, tailored data outputs, ongoing support. | Higher cost, less direct control over extraction logic or scheduling. | Enterprise workflows, large-scale data programs, or teams that prefer to fully outsource data collection. |

Introducing Infatica Web Scraper API

As data needs grow, many teams reach a point where maintaining their own scraping infrastructure starts to outweigh the benefits of full control. Keeping up with proxy rotation, handling CAPTCHAs, rendering dynamic pages, and maintaining parsers as websites change can quickly become a continuous engineering task rather than a one-time setup. This is where a Web Scraper API becomes a practical, scalable alternative.

A Web Scraper API acts as an abstraction layer between your application and the target websites. Instead of building and maintaining your own scraping stack, you send a request to the API with the target URL and parameters, and the service handles the rest – routing the request through the appropriate IPs, rendering JavaScript when necessary, and returning clean, structured data or raw HTML ready for parsing.
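In code, the interaction usually comes down to a single authenticated HTTP call. The sketch below is generic and hypothetical. The endpoint, the `url`, `country`, and `render_js` parameters, and the bearer-token header are placeholders, not the Infatica API, whose actual request format is defined in its own documentation. The example builds the request without sending it, and `scrape` would perform the call.

```python
import json
import urllib.request

# Placeholders: the real endpoint, parameter names, and authentication scheme
# come from your provider's documentation.
API_ENDPOINT = "https://api.scraper.example/v1/scrape"
API_KEY = "YOUR_API_KEY"


def build_scrape_request(target_url: str, country: str = "us",
                         render_js: bool = False) -> urllib.request.Request:
    """Describe the job (target, geo-location, rendering) as a JSON payload."""
    payload = {"url": target_url, "country": country, "render_js": render_js}
    return urllib.request.Request(
        API_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {API_KEY}"},
        method="POST",
    )


def scrape(target_url: str, **options) -> str:
    """Send the job to the API; proxies, rendering, and retries happen on the service side."""
    with urllib.request.urlopen(build_scrape_request(target_url, **options), timeout=60) as resp:
        return resp.read().decode("utf-8")


req = build_scrape_request("https://shop.example/category/running-shoes", country="de", render_js=True)
print(req.get_method(), json.loads(req.data))
```

Compared with the in-house approach, the client code stays this small no matter how many proxies or rendering workers run behind the endpoint.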

In practice, this means your team can focus on what to collect and how to use the data, rather than how to reliably access it. Features such as automatic proxy rotation, geo-targeting, CAPTCHA handling, and request retries are built into the service. This significantly reduces operational overhead while improving success rates and data consistency across different regions and sources.

For organizations that rely on timely external data – whether for pricing intelligence, market research, or product features – this approach provides a balance between flexibility and reliability. It offers the scalability of enterprise-grade scraping infrastructure without the burden of building and maintaining it internally.

Ready to simplify your data collection workflow?

Start extracting reliable web data at scale without the infrastructure overhead!