- What Is Data Monitoring? Beyond Dashboards and Alerts

- Common Data Monitoring Use Cases (Especially Across the Web)

- The Challenges of Monitoring Web Data at Scale

- Approaches to Web Data Monitoring – A Comparison

- Using a Web Scraper API to Power Continuous Monitoring and Actionable Insights

- Frequently Asked Questions

Businesses need timely, reliable information to stay competitive. Data monitoring allows organizations to track changes, detect trends, and respond quickly – not just within internal systems, but across public and web-based data sources. Let’s explore what data monitoring is, why it matters, the challenges of monitoring web data at scale, and practical strategies for turning collected data into actionable insights.

What Is Data Monitoring? Beyond Dashboards and Alerts

Data monitoring is often associated with internal systems – tracking application performance, infrastructure metrics, or business KPIs through dashboards and alerts. While these are important, they represent only part of what modern data monitoring involves.

At its core, data monitoring is the continuous process of observing data over time to detect changes, anomalies, and trends that matter to a business. The focus is not on static snapshots, but on how data evolves – what changes, how frequently it changes, and what those changes signal.

In practice, data monitoring extends far beyond internal databases. Many critical business signals exist outside an organization’s own systems, embedded in public or third-party sources. Prices change on competitor websites, product availability fluctuates across marketplaces, search engine results shift daily, and content, policies, or feature sets are updated without notice. Monitoring this external data is often just as important as tracking internal metrics.

Another key distinction is that data monitoring is proactive rather than reactive. Instead of manually checking data when a problem arises, monitoring systems are designed to collect data continuously and surface meaningful changes automatically. This allows teams to respond faster, reduce blind spots, and base decisions on up-to-date information.
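In code, "surfacing meaningful changes automatically" usually comes down to comparing the latest snapshot with the previous one. The Python sketch below assumes each snapshot is a dictionary keyed by a stable identifier (a URL, SKU, or plan name). The `diff_snapshots` function and `Change` record are illustrative names, not part of any specific tool.

```python
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass(frozen=True)
class Change:
    key: str
    kind: str  # "added", "removed", or "changed"
    old: Any = None
    new: Any = None


def diff_snapshots(previous: Dict[str, Any], current: Dict[str, Any]) -> List[Change]:
    """Compare two keyed snapshots and return only what changed."""
    changes: List[Change] = []
    # Union of keys from both runs, sorted so the output order is stable.
    for key in sorted(previous.keys() | current.keys()):
        if key not in previous:
            changes.append(Change(key, "added", new=current[key]))
        elif key not in current:
            changes.append(Change(key, "removed", old=previous[key]))
        elif previous[key] != current[key]:
            changes.append(Change(key, "changed", previous[key], current[key]))
    return changes


yesterday = {"plan:basic": 19.0, "plan:pro": 49.0}
today = {"plan:basic": 19.0, "plan:pro": 44.0, "plan:team": 99.0}
for change in diff_snapshots(yesterday, today):
    print(change)
```

Unchanged entries produce no output, so downstream alerting only ever sees the differences.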

Common Data Monitoring Use Cases (Especially Across the Web)

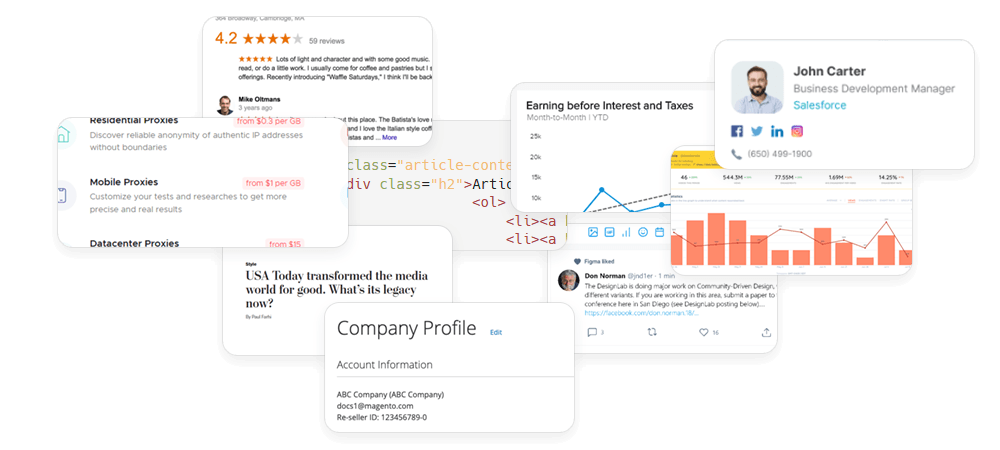

Data monitoring becomes truly valuable when tied to specific, measurable business outcomes. While internal monitoring focuses on operational health, many competitive and market-driven insights depend on tracking data that lives outside your own systems – often on public websites.

Below are some of the most common use cases where continuous external data monitoring plays a critical role.

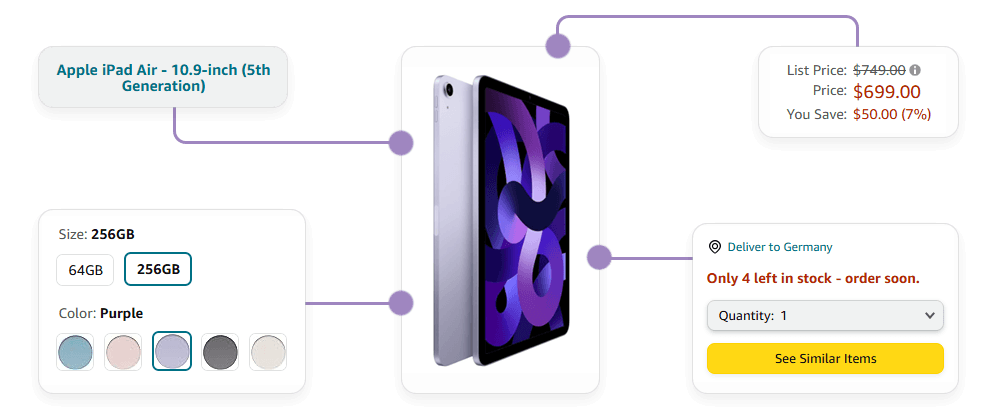

Price Monitoring

In industries like ecommerce, travel, retail, and SaaS, pricing is highly dynamic. Competitors adjust prices frequently based on demand, seasonality, stock levels, and promotions. Monitoring competitor pricing enables businesses to:

- Detect price changes soon after they occur

- Identify discount strategies or flash sales

- Track regional pricing differences

- Inform dynamic repricing models

Because prices can change multiple times per day, this use case requires consistent, repeatable data collection rather than occasional manual checks.
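As a minimal sketch of how a price-monitoring job might label what it sees between two collection runs, the function below compares an old and a new price. It uses `Decimal` to avoid floating-point rounding on currency values. The 20% cutoff for flagging a possible flash sale is an arbitrary assumption that would need tuning per category.

```python
from decimal import Decimal
from typing import Optional


def classify_price_change(
    old: Decimal, new: Decimal, sale_threshold: Decimal = Decimal("0.20")
) -> Optional[str]:
    """Label a price movement between two runs; returns None when unchanged."""
    if old <= 0:
        raise ValueError("previous price must be positive")
    if new == old:
        return None
    relative = (new - old) / old  # e.g. -0.25 means a 25% drop
    if relative <= -sale_threshold:
        return "possible flash sale"  # a large drop worth a closer look
    return "increase" if relative > 0 else "decrease"


print(classify_price_change(Decimal("100.00"), Decimal("75.00")))   # possible flash sale
print(classify_price_change(Decimal("100.00"), Decimal("95.00")))   # decrease
print(classify_price_change(Decimal("100.00"), Decimal("105.00")))  # increase
```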

Product Availability and Assortment Tracking

Stock levels, product listings, and assortment changes can reveal shifts in supply chains or strategy. Monitoring product pages helps businesses:

- Detect when items go out of stock

- Identify newly launched products

- Track discontinued items

- Analyze category expansion or contraction

This is particularly valuable for marketplace sellers, distributors, and brands monitoring how their products appear across different platforms.
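Assortment and stock tracking largely reduces to set comparisons between two catalog snapshots. The sketch below assumes each run yields a mapping of (hypothetical) product IDs to an in-stock flag.

```python
from typing import Dict, List


def compare_catalogs(previous: Dict[str, bool], current: Dict[str, bool]) -> Dict[str, List[str]]:
    """Compare {product_id: in_stock} snapshots from two collection runs."""
    prev_ids, curr_ids = set(previous), set(current)
    shared = prev_ids & curr_ids
    return {
        "new_products": sorted(curr_ids - prev_ids),
        # Listed before but absent now: possibly discontinued, or a failed scrape.
        "missing_products": sorted(prev_ids - curr_ids),
        "went_out_of_stock": sorted(p for p in shared if previous[p] and not current[p]),
        "back_in_stock": sorted(p for p in shared if not previous[p] and current[p]),
    }


monday = {"sku-1": True, "sku-2": True, "sku-3": False}
tuesday = {"sku-1": False, "sku-3": True, "sku-4": True}
print(compare_catalogs(monday, tuesday))
```

A product missing from a single run is only a candidate for "discontinued": a page that failed to load looks the same, so it is usually safer to confirm the absence across several consecutive runs.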

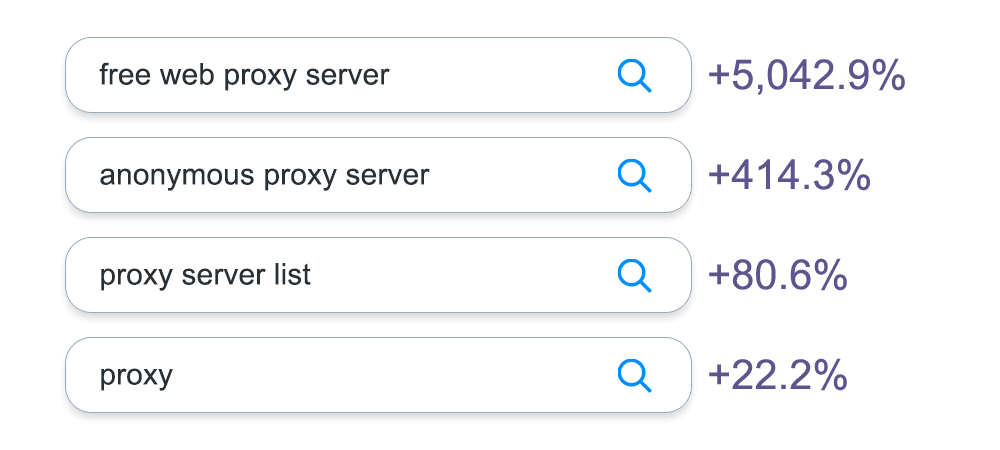

SERP and Search Visibility Monitoring

Search engine results pages (SERPs) are dynamic environments influenced by algorithm updates, competition, and user location. Monitoring search results helps companies:

- Track keyword rankings

- Observe changes in featured snippets or ads

- Analyze competitor visibility

- Monitor localization effects

Since rankings fluctuate frequently, consistent monitoring is essential to understand trends rather than isolated positions.
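One simple way to look at trends instead of isolated positions is to compare the average rank over two consecutive windows. The sketch below assumes one rank observation per day for a keyword; the 7-day window and the sample ranks are illustrative.

```python
from statistics import mean
from typing import Sequence


def rank_trend(positions: Sequence[int], window: int = 7) -> float:
    """Average rank of the previous window minus average rank of the latest window.

    Lower positions are better, so a positive result means the page moved up.
    """
    if len(positions) < 2 * window:
        raise ValueError(f"need at least {2 * window} daily observations")
    recent = mean(positions[-window:])
    earlier = mean(positions[-2 * window:-window])
    return float(earlier - recent)


daily_ranks = [9, 8, 10, 9, 8, 9, 9, 6, 5, 7, 5, 6, 5, 6]
print(rank_trend(daily_ranks))
```

For the sample data the result is roughly 3.1, meaning the page moved up about three positions on average. Averaging smooths out the day-to-day noise that a single snapshot would exaggerate.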

Competitor Content and Feature Updates

Businesses also monitor how competitors evolve their offerings over time. This includes:

- New feature announcements

- Pricing page updates

- Changes in messaging or positioning

- Policy or terms updates

Tracking these changes allows teams in product, marketing, and compliance to stay informed without manually revisiting dozens of websites.
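For competitor pages, a cheap first check is to hash a normalized copy of the page text and compute a detailed diff only when the hash changes. This sketch uses Python's standard `hashlib` and `difflib`. It assumes the page text has already been extracted from the HTML, and splitting on ". " is a deliberately crude stand-in for proper sentence segmentation.

```python
import difflib
import hashlib
import re
from typing import List


def normalize(text: str) -> str:
    """Collapse whitespace so reflowed markup doesn't register as a change."""
    return re.sub(r"\s+", " ", text).strip()


def fingerprint(text: str) -> str:
    """Stable hash of the normalized text; compare it between runs."""
    return hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()


def summarize_change(old: str, new: str) -> List[str]:
    """Return only the removed ('- ') and added ('+ ') sentences."""
    old_sentences = normalize(old).split(". ")
    new_sentences = normalize(new).split(". ")
    return [
        line
        for line in difflib.ndiff(old_sentences, new_sentences)
        if line.startswith(("+ ", "- "))
    ]


old_page = "Plans start at $10.   Support is email only."
new_page = "Plans start at $12. Support is email only."
if fingerprint(old_page) != fingerprint(new_page):
    print(summarize_change(old_page, new_page))
```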

Compliance and Regulatory Monitoring

In regulated industries, monitoring external websites for policy updates, regulatory notices, or legal changes is critical. Organizations may need to track:

- Updates to government portals

- Changes in public registries

- Modifications in platform terms of service

- Industry guideline revisions

Automated monitoring helps surface important updates quickly, reducing compliance risk.
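Where a source supports them, HTTP conditional requests (standardized in RFC 9110) make frequent checks cheap: the client sends back the `ETag` or `Last-Modified` value from its previous fetch, and the server can answer `304 Not Modified` instead of resending the page. Not every government portal or registry implements this, so the sketch below treats a `200` as "possibly changed", to be confirmed by a content comparison. The helper names are hypothetical and not tied to any HTTP library.

```python
from typing import Dict, Optional


def conditional_headers(etag: Optional[str], last_modified: Optional[str]) -> Dict[str, str]:
    """Build request headers that let the server reply 304 if nothing changed."""
    headers: Dict[str, str] = {}
    if etag:
        headers["If-None-Match"] = etag
    if last_modified:
        headers["If-Modified-Since"] = last_modified
    return headers


def maybe_changed(status_code: int) -> bool:
    """304 means the stored copy is current; 200 means re-check the content."""
    if status_code == 304:
        return False
    if status_code == 200:
        return True
    # Redirects, 404s, or errors on a regulatory page deserve a human look.
    raise RuntimeError(f"unexpected status {status_code}; review manually")


print(conditional_headers(etag='"33a64df5"', last_modified=None))  # {'If-None-Match': '"33a64df5"'}
```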

The Challenges of Monitoring Web Data at Scale

Monitoring internal systems typically means working with structured databases and predictable infrastructure. Monitoring public web data is different. The data may be accessible, but the environment in which it exists is dynamic, distributed, and outside your control.

As monitoring frequency increases and coverage expands, several practical challenges emerge.

Website Structure Changes

Websites regularly update their layouts, HTML structures, and frontend frameworks. Even minor design changes can break data extraction logic. A monitoring workflow that works today may fail tomorrow if the underlying page structure changes.

For teams building their own monitoring systems, this often means ongoing maintenance and rapid adjustments to parsing logic.
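A common safeguard against silent breakage is to validate what the parser returns and flag a run when required fields start coming back empty, which often means a selector stopped matching after a redesign. This sketch assumes one dictionary per page; the `title`/`price` schema and the 20% threshold are hypothetical.

```python
from typing import Dict, Iterable, List, Optional

Record = Dict[str, Optional[str]]
REQUIRED_FIELDS = ("title", "price")  # hypothetical schema for a product page


def missing_field_rate(records: List[Record], required: Iterable[str] = REQUIRED_FIELDS) -> float:
    """Share of records in which at least one required field is missing or empty."""
    if not records:
        return 1.0  # nothing extracted at all is the strongest breakage signal
    fields = tuple(required)
    broken = sum(1 for record in records if any(not record.get(f) for f in fields))
    return broken / len(records)


def looks_broken(records: List[Record], threshold: float = 0.2) -> bool:
    """Flag a run for review when too many records fail validation."""
    return missing_field_rate(records) > threshold


run = [
    {"title": "Desk lamp", "price": "24.99"},
    {"title": "Desk chair", "price": None},  # e.g. the price selector no longer matched
    {"title": "", "price": "89.00"},
]
print(missing_field_rate(run), looks_broken(run))
```

Here two of the three records fail validation, so the run is flagged before bad data reaches historical comparisons.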

Anti-Bot and Traffic Filtering Mechanisms

Many websites implement traffic management systems to protect against abusive behavior and infrastructure strain. These systems may include rate limiting, IP-based access restrictions, and CAPTCHA challenges.

For legitimate data monitoring use cases, these mechanisms can still interrupt data collection if requests are too frequent or originate from a limited number of IP addresses.
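Pacing your own requests is the simplest way to stay within a site's limits. Below is a minimal token-bucket rate limiter, a standard technique rather than any particular provider's mechanism: it allows short bursts up to `capacity` and otherwise refills at `rate` requests per second. The clock is injectable so the behavior can be tested without waiting.

```python
import time
from typing import Callable


class TokenBucket:
    """Client-side rate limiter: bursts up to `capacity`, then `rate` requests/second."""

    def __init__(self, rate: float, capacity: int, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        """Consume one token if available; return False if the caller should wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


bucket = TokenBucket(rate=0.5, capacity=2)  # at most one request every two seconds after a burst of two
print(bucket.try_acquire(), bucket.try_acquire(), bucket.try_acquire())
```

In practice you would keep one bucket per target domain and, when `try_acquire()` returns `False`, wait briefly before trying again.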

Frequency vs. Cost Trade-Offs

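Effective monitoring depends on timeliness. Price changes, ranking shifts, or stock updates may occur multiple times per day. However, every additional check multiplies the number of requests, which increases infrastructure load, proxy and networking requirements, and ultimately cost. The practical goal is to match collection frequency to how quickly each data source actually changes, rather than checking everything as often as possible.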
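Because request volume multiplies across pages, check frequency, and location or device variants, it helps to estimate it before choosing a schedule. The figures below are illustrative, not benchmarks.

```python
def monthly_requests(pages: int, checks_per_day: int, variants: int = 1, days: int = 30) -> int:
    """Requests per month = pages x checks per day x location/device variants x days."""
    return pages * checks_per_day * variants * days


print(monthly_requests(1_000, 24))              # 720000 (hourly checks)
print(monthly_requests(1_000, 24, variants=3))  # 2160000 (hourly, three locations)
print(monthly_requests(1_000, 4, variants=3))   # 360000 (every six hours, three locations)
```

Moving from hourly checks to one every six hours cuts volume by a factor of six, which is why it pays to set frequency per data source.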

Geographic and Contextual Variability

Web content can differ depending on user location, device type, language settings, and logged-in status. For businesses tracking localized pricing, regional search results, or market-specific product availability, monitoring must account for these contextual variations. Collecting data from a single location may not provide an accurate view.
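One way to account for context is to expand every target URL into one job per location and device. In this sketch the `geo` and `device` keys are hypothetical; scraper APIs and proxy providers name these options differently, and some also accept language or other parameters.

```python
from itertools import product
from typing import Dict, List


def build_request_matrix(urls: List[str], locations: List[str], devices: List[str]) -> List[Dict[str, str]]:
    """One collection job per URL x location x device combination."""
    return [
        {"url": url, "geo": geo, "device": device}  # hypothetical parameter names
        for url, geo, device in product(urls, locations, devices)
    ]


jobs = build_request_matrix(["https://example.com/p/1"], ["us", "de", "jp"], ["desktop", "mobile"])
print(len(jobs))  # 6
print(jobs[0])    # {'url': 'https://example.com/p/1', 'geo': 'us', 'device': 'desktop'}
```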

Data Consistency Over Time

Monitoring is not just about capturing data; it’s about comparing it over time. That requires consistent formatting, stable identifiers, and normalized outputs. Without standardized collection processes, historical comparisons can become unreliable or difficult to interpret.
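Stable identifiers and normalized values are what make two runs comparable. The sketch below canonicalizes URLs (lowercased scheme and host, no fragment, sorted query string, tracking parameters removed) and parses prices into `Decimal`. It assumes US-style price strings such as `$1,299.00` and treats only `utm_*` parameters as tracking noise; both are simplifying assumptions.

```python
from datetime import datetime, timezone
from decimal import Decimal
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

TRACKING_PREFIXES = ("utm_",)  # assumption: only utm_* parameters are noise


def canonical_url(url: str) -> str:
    """Stable identifier: lowercase scheme/host, drop fragment and tracking params, sort query."""
    parts = urlsplit(url)
    query = sorted(
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if not k.startswith(TRACKING_PREFIXES)
    )
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), parts.path, urlencode(query), ""))


def parse_price(text: str) -> Decimal:
    """Assumes US-style formatting like '$1,299.00'; other locales need their own rules."""
    cleaned = text.replace("$", "").replace(",", "").strip()
    return Decimal(cleaned).quantize(Decimal("0.01"))


def normalize_record(url: str, price_text: str) -> dict:
    """One normalized observation with a UTC timestamp."""
    return {
        "id": canonical_url(url),
        "price": parse_price(price_text),
        "observed_at": datetime.now(timezone.utc).isoformat(),
    }


print(canonical_url("HTTPS://Shop.Example.com/item?b=2&utm_source=news&a=1#reviews"))
# https://shop.example.com/item?a=1&b=2
```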

Approaches to Web Data Monitoring – A Comparison

| Criteria | Manual Monitoring | In-House Scrapers | Web Scraper API |
|---|---|---|---|
| Setup Complexity | None | High (requires engineering time to build and maintain) | Low (integration via API) |
| Scalability | Very limited | Moderate to high (depends on infrastructure) | High (designed for large-scale data collection) |
| Maintenance Effort | Ongoing manual checks | Continuous updates required for site changes | Handled by API provider |
| Handling Website Changes | Manual re-checking | Requires developer intervention | Abstracted and managed externally |
| IP & Traffic Management | Not applicable | Must manage proxies, rotation, and request strategy | Built-in traffic handling and IP management |
| Reliability Over Time | Low | Variable (depends on engineering resources) | High (optimized for consistent access) |
| Monitoring Frequency | Infrequent | Configurable, but resource-intensive at high frequency | Designed for scheduled and repeated requests |
| Time to Value | Immediate but limited | Weeks to months | Immediate integration, fast deployment |
| Best For | Small-scale checks | Teams with strong scraping infrastructure expertise | Businesses focused on data outcomes rather than infrastructure |

Using a Web Scraper API to Power Continuous Monitoring and Actionable Insights

Once the challenges and operational trade-offs of web data monitoring are clear, the question becomes: how can teams reliably monitor external data at scale and transform it into business value? This is where a Web Scraper API can play a central role in a continuous data monitoring workflow.

Automating Continuous Data Collection

A Web Scraper API enables teams to collect data from multiple sources consistently and repeatedly, without manual intervention. This allows organizations to:

- Monitor hundreds or thousands of pages simultaneously

- Handle varying page structures and dynamic content without constant engineering adjustments
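On the client side, monitoring many pages at once is mostly a matter of bounded concurrency. This `asyncio` sketch caps in-flight requests with a semaphore. `fake_fetch` is a placeholder for whatever call your scraper API client actually makes, since request and response formats vary by provider.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List


async def collect_all(
    urls: List[str],
    fetch: Callable[[str], Awaitable[dict]],
    max_concurrency: int = 20,
) -> Dict[str, object]:
    """Fetch many pages concurrently while capping the number of in-flight requests."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(url: str) -> dict:
        async with semaphore:
            return await fetch(url)

    # return_exceptions=True keeps one failed page from aborting the whole run;
    # failures appear as exception objects in the result mapping.
    results = await asyncio.gather(*(guarded(u) for u in urls), return_exceptions=True)
    return dict(zip(urls, results))


async def fake_fetch(url: str) -> dict:
    # Placeholder for a real scraper API call; the response shape is an assumption.
    await asyncio.sleep(0.01)
    return {"url": url, "status": "ok"}


results = asyncio.run(collect_all([f"https://example.com/p/{i}" for i in range(100)], fake_fetch))
print(len(results))  # 100
```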

Handling Infrastructure and Access Challenges

One of the main operational hurdles in web monitoring is managing the underlying infrastructure. With a Web Scraper API, teams gain:

- Built-in IP rotation to avoid temporary bans

- Traffic management to respect site limits

- Error handling and retry logic for failed requests

By abstracting these complexities, teams can focus on what data to monitor rather than how to access it.
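Even when a provider retries internally, client code usually still wraps each call to cover network errors or temporary server-side failures. A common pattern is exponential backoff with full jitter, sketched below. `TransientError` is a hypothetical exception your client would raise for retryable failures such as timeouts, HTTP 429, or 5xx responses.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, HTTP 429, 5xx)."""


def with_retries(
    call: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `call`, retrying transient failures with exponential backoff and full jitter.

    `attempts` is the total number of tries, including the first one.
    """
    failures = 0
    while True:
        try:
            return call()
        except TransientError:
            failures += 1
            if failures >= attempts:
                raise  # out of attempts: let the caller log or alert
            # The delay ceiling doubles per failure (0.5s, 1s, 2s, ...) up to max_delay;
            # picking a random delay below it keeps clients from retrying in lockstep.
            ceiling = min(max_delay, base_delay * 2 ** (failures - 1))
            sleep(random.uniform(0, ceiling))


def fetch_once() -> dict:
    # Placeholder for a real request to a scraper API or target page.
    return {"status": "ok"}


print(with_retries(fetch_once))
```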

Transforming Data Into Actionable Insights

Collecting data is only the first step. A robust monitoring workflow turns this data into actionable insights. With consistent, structured outputs from an API, organizations can:

- Detect meaningful changes quickly (price shifts, stock updates, policy changes)

- Feed monitoring results into analytics or BI tools for trend analysis

- Automate decisions based on detected changes, such as dynamic repricing or alert notifications

- Maintain historical datasets to identify patterns and inform strategic decisions

This ensures that data monitoring becomes a driver of business outcomes, rather than just a passive collection process.
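To keep a history that analytics tools can query, even a single SQL table is a workable starting point. This sketch uses Python's built-in `sqlite3` with an in-memory database and made-up sample observations. The schema is an assumption, and prices are stored as integer cents to avoid floating-point rounding.

```python
import sqlite3

# In-memory for the example; pass a file path instead to keep history between runs.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE observations (
        url TEXT NOT NULL,
        observed_at TEXT NOT NULL,   -- ISO 8601 timestamp in UTC
        price_cents INTEGER NOT NULL
    )
    """
)

# Made-up sample observations for one product page.
rows = [
    ("https://shop.example.com/item", "2024-05-01T08:00:00+00:00", 4900),
    ("https://shop.example.com/item", "2024-05-02T08:00:00+00:00", 4400),
    ("https://shop.example.com/item", "2024-05-03T08:00:00+00:00", 4600),
]
conn.executemany("INSERT INTO observations VALUES (?, ?, ?)", rows)
conn.commit()

# Per-page summary that a dashboard or BI tool could consume.
summary = conn.execute(
    """
    SELECT url, COUNT(*), MIN(price_cents), MAX(price_cents), AVG(price_cents)
    FROM observations
    GROUP BY url
    """
).fetchall()
print(summary)
```

Larger setups typically load the same rows into a data warehouse or BI tool instead, but the shape of the data (identifier, timestamp, normalized value) stays the same.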

Ready to Monitor Data Smarter?

Reliable, continuous data monitoring starts with consistent access to the right information. A Web Scraper API simplifies collection, handles infrastructure challenges, and delivers structured outputs – letting your team focus on insights, not maintenance.

Start building a resilient monitoring workflow today and turn external data into actionable business decisions.