- What Are Residential Proxies?

- How Do Residential Proxies Work?

- Are Residential Proxies Legal?

- Choosing a Residential Proxy Provider

- What Are Residential Proxies For?

- How Proxy Providers Acquire Residential IPs

- Different Types of Residential Proxies

- Pros and Cons of Using Residential Proxies

- Frequently Asked Questions

If you want to learn more about residential proxies, you have come to the right place. In this article, you will learn what residential proxies are, how they work, what types exist, how they relate to online privacy and anonymity, and how to choose a legal, reputable residential proxy provider for your needs.

What Are Residential Proxies?

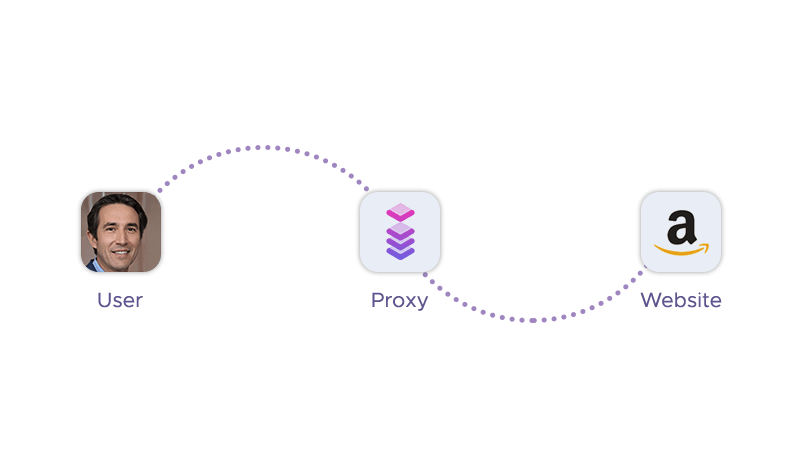

A residential proxy is a type of proxy that uses a residential IP address to access the internet. A residential IP address is an IP address that an internet service provider (ISP) assigns to a home user. Unlike datacenter proxies, whose IP addresses belong to servers in data centers and hosting networks, residential IP addresses are used by real people and devices. As a result, websites tend to treat traffic from this proxy type as more trustworthy and are less likely to detect or block it.

How Do Residential Proxies Work?

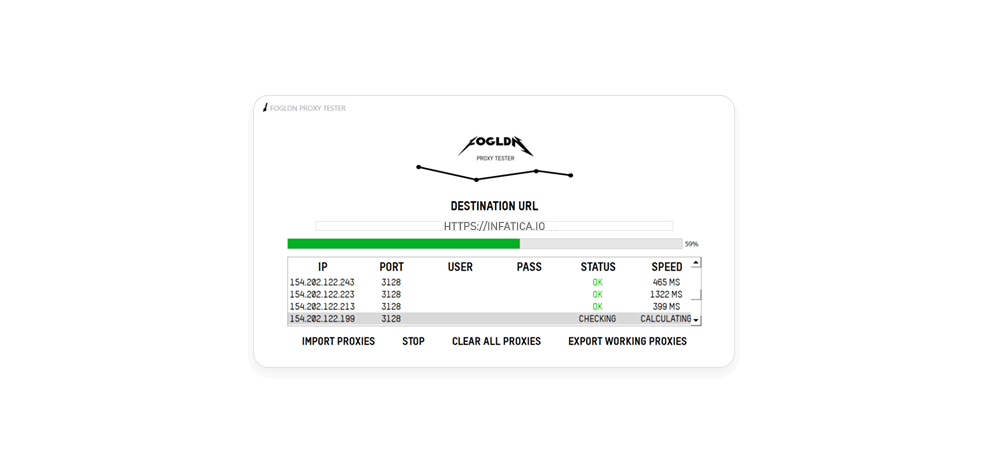

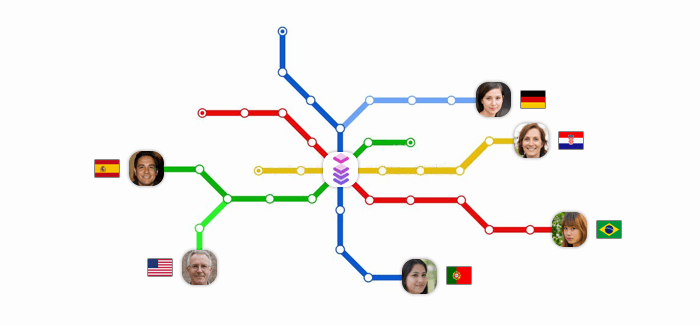

Residential proxies are, at their core, intermediary servers – they work by routing traffic through a device that belongs to a real user and has a residential IP address. The device acts as an intermediary server between you and the target website, concealing your real IP address and location. The steps involved in using a residential proxy are:

- You choose a residential proxy provider and sign up for a plan that suits your needs. You can select the country, city, or ISP of the residential IP address you want to use.

- You establish an internet connection to the residential proxy network using a proxy application, a browser extension, or proxy management software. The proxy provider assigns you an IP address from its pool of available devices, so target servers see that address rather than the true origin of your request.

- You send a request to the website you want to visit through the proxy application. The proxy application will forward your request to the device that has the residential IP address you are using.

- The device will receive your request and send it to the website on your behalf. The website will see the device's residential IP address and location as the source of the request, not yours.

- The website will send back a response to the device, which will then forward it to the proxy application. The proxy application will relay the response to you, allowing you to anonymously access the website's content and data.
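From the client's side, the steps above usually come down to pointing an HTTP client at the provider's gateway. The following is a minimal sketch using only Python's standard library. The gateway host `proxy.example.com`, the port `8000`, and the credentials are placeholders invented for illustration, and the helper names `build_proxy_url` and `build_opener_via_proxy` are hypothetical; your provider's dashboard will list the real values.

```python
from urllib.parse import quote
import urllib.request


def build_proxy_url(host: str, port: int, username: str, password: str) -> str:
    """Return an HTTP proxy URL with percent-encoded credentials."""
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"http://{user}:{pwd}@{host}:{port}"


def build_opener_via_proxy(proxy_url: str) -> urllib.request.OpenerDirector:
    """Create an opener that sends HTTP and HTTPS requests through the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Hypothetical gateway and credentials; replace with values from your provider.
PROXY_URL = build_proxy_url("proxy.example.com", 8000, "user", "p@ss:word")
opener = build_opener_via_proxy(PROXY_URL)

# Uncomment to send a real request through the proxy (needs a working gateway):
# with opener.open("https://example.com", timeout=10) as response:
#     print(response.status)
```

Percent-encoding the credentials keeps characters such as `@` or `:` in a password from being misread as part of the URL structure.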

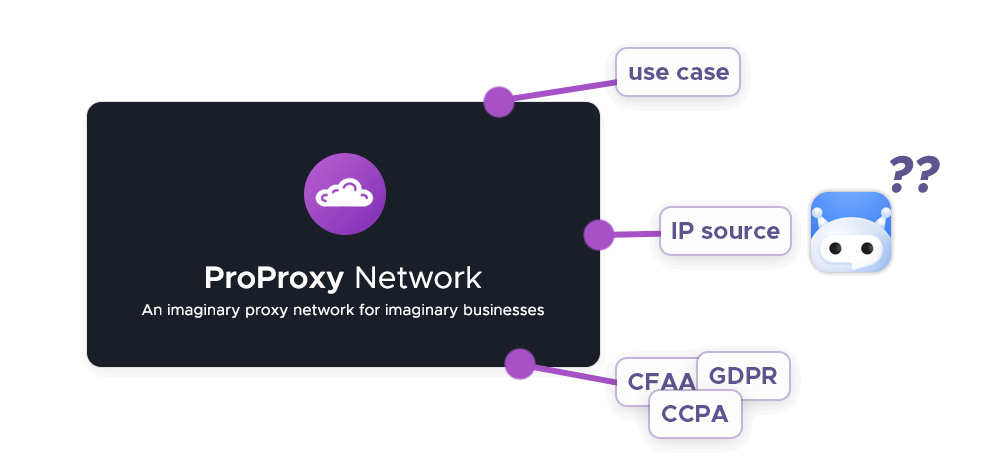

Are Residential Proxies Legal?

The answer is not so straightforward – and depends on several factors, such as the source of the residential IP address, the purpose of using the residential proxy, and the laws and regulations of the jurisdiction where the proxy is being used.

The source of the residential IP address is important because it determines whether the proxy provider has the consent of the device owner to use their IP address. Some proxy providers obtain residential IP addresses from legitimate sources, such as users who voluntarily share their bandwidth in exchange for a service or a reward. Other proxy providers may use malicious methods, such as hacking, malware, or botnets, to hijack the IP addresses of unsuspecting users.

The purpose of using the residential proxy is also important because it determines whether the proxy user is violating the terms of service of the website they are accessing. Some websites prohibit the use of proxies in their policies because they consider it a form of fraud, spam, or abuse. For example, some websites ban the use of proxies for web scraping, market research, or ad verification, viewing these activities as a threat to their business or privacy. Other websites allow the use of proxies for legitimate purposes, such as online anonymity, security, or accessing geo-restricted content. The proxy user should always check the website's policies before using a residential proxy.
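One machine-readable part of a website's policy is its `robots.txt` file. The sketch below uses Python's standard `urllib.robotparser` module to check whether a given path is allowed for a crawler. The `robots.txt` content, the user agent `my-crawler`, and the helper name `is_path_allowed` are illustrative assumptions. Note that `robots.txt` is only one signal and does not replace reading the site's terms of service.

```python
from urllib.robotparser import RobotFileParser


def is_path_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Illustrative robots.txt content, not taken from any real site.
EXAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

print(is_path_allowed(EXAMPLE_ROBOTS, "my-crawler", "https://example.com/products"))
print(is_path_allowed(EXAMPLE_ROBOTS, "my-crawler", "https://example.com/private/data"))
```

In a real project, you would download the file from the target site (for example with `RobotFileParser.set_url` and `read`) instead of passing the text inline.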

The laws of the jurisdiction where the proxy is being used also matter because they determine whether the proxy user is acting lawfully. Different countries and regions regulate the use of proxies differently, especially when it comes to data protection, privacy, and intellectual property rights. For example, some countries have strict laws that require the proxy user to obtain the consent of the data subject before collecting or processing their personal data. Other countries have laxer laws that do not require such consent.

Choosing a Residential Proxy Provider

If you are looking for a reliable, ethical, and high-performance proxy provider, you should consider Infatica. Infatica is a proxy provider that offers various types of residential proxies for purposes like web scraping, market research, ad verification, and geo-unblocking. Here are some of the key features and benefits that Infatica offers:

Infatica is an ethical proxy provider. Infatica obtains its residential IP addresses from legitimate sources, such as users who voluntarily share their bandwidth in exchange for a service or a reward. Infatica does not use any malicious methods, such as hacking, malware, or botnets, to hijack the IP addresses of unsuspecting users.

Infatica offers 99.9% uptime and maximum performance. Infatica has a large and diverse pool of IP addresses with geo-targeting across 150 countries, ensuring that you always have access to the proxies you need. Infatica’s network is also characterized by speed, compatibility, and stability, ensuring that you always have a smooth and seamless browsing experience. Infatica monitors and optimizes its network constantly, ensuring that you always get the best performance, speed, and support for your budget.

Infatica provides web scraping tools. Infatica offers a web scraping platform that allows you to easily and efficiently scrape data from any website. You can use Infatica's web scraping tools to create, manage, and run your web scraping projects, without any coding or technical skills. You can also export your scraped data, without any hassle or limitations.

What Are Residential Proxies For?

Here are some brief explanations and real-world examples for the use cases of this proxy type:

Web Scraping

This is the process of extracting data from websites for various purposes, such as analysis, research, or marketing. Proxies can help web scrapers to avoid detection and blocking by websites, as they can mimic the behavior of real users and access the website from different locations and devices. For example, a web scraper can use residential proxies to scrape product reviews, prices, or availability from an online store, without being banned or throttled by the website.

Social Media Management

This is the process of creating, publishing, and monitoring content on social media platforms, such as Facebook, Twitter, or Instagram. A residential proxy platform can help social media managers to manage multiple accounts, access geo-restricted content, and avoid account suspension or verification. For example, a social media manager can use proxies to post content, interact with followers, or conduct market research on different social media platforms, without revealing their real IP address or location.

Sneaker Bots

Sneaker bots are software applications that automate the process of buying limited-edition sneakers from online retailers, such as Nike, Adidas, or Supreme. Residential proxies can help sneaker bots to increase their chances of securing the desired sneakers, as they can bypass the anti-bot measures and captchas of the websites and make multiple requests from different IP addresses and locations. For example, a sneaker bot can use them to buy multiple pairs of sneakers from different online stores, without being detected or blocked by the websites.

SEO Monitoring

This is the process of tracking and analyzing the performance and ranking of a website on search engines, such as Google, Bing, or Yahoo. Proxies can help SEO monitors to obtain accurate and unbiased data, as they can access the search engines from different locations and devices and avoid the personalization and localization of the search results. For example, an SEO monitor can use them to check the ranking, web traffic, or keywords of a website on different search engines, without being influenced by their own IP address or location.

Private Browsing

This is the process of surfing the internet with more anonymity, without exposing your real IP address or location to the sites you visit. Residential IP addresses can help private browsers protect their privacy, as they hide the user's real IP address and location from the target website. Note that a proxy does not encrypt traffic by itself; encryption comes from HTTPS between the browser and the website. For example, a private browser can use them to access sensitive or restricted websites, such as online banking, gambling, or adult content, while making it harder for websites and third parties to tie that activity to their real IP address.

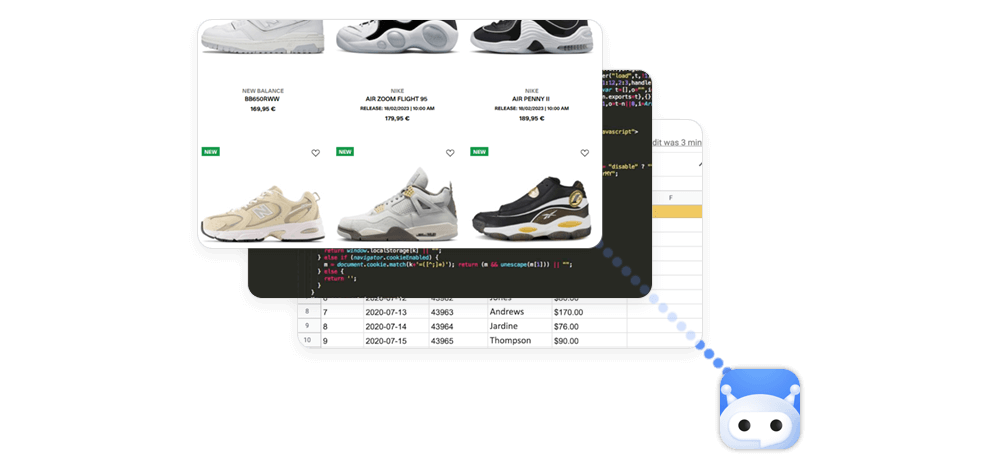

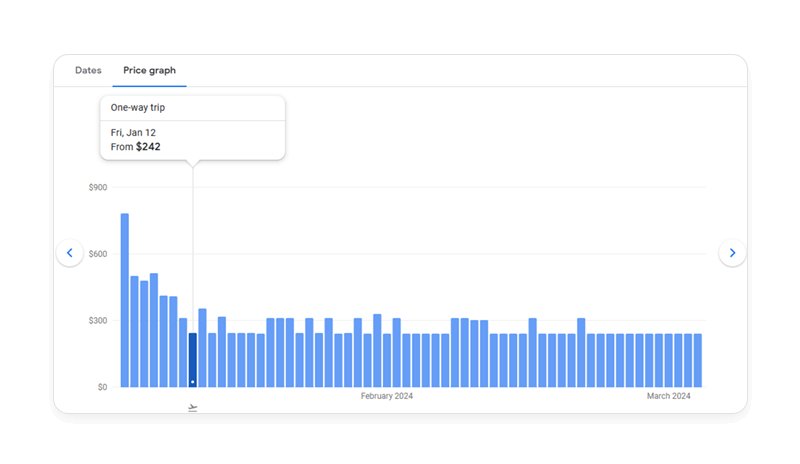

Market Price Monitoring

This is the process of collecting and comparing the prices of products or services from different online sources, such as competitors, suppliers, or customers. Proxies can help price monitors to obtain reliable and up-to-date data, as they can access the online sources from different locations and devices, and avoid the dynamic pricing and geo-targeting of the websites. For instance, a price monitor can use them to monitor the prices of flights, hotels, or car rentals from different online travel agencies, without being affected by their own IP address or location.
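As a rough illustration of the comparison step, the sketch below takes prices that were already collected through proxies in different regions and reports the cheapest and the most expensive region. The region codes and price values are made-up illustration data, the function name `price_spread` is hypothetical, and all prices are assumed to have been converted to one currency beforehand.

```python
def price_spread(prices_by_region: dict[str, float]) -> tuple[str, str, float]:
    """Return (cheapest_region, priciest_region, absolute_difference)."""
    if not prices_by_region:
        raise ValueError("no prices supplied")
    cheapest = min(prices_by_region, key=prices_by_region.get)
    priciest = max(prices_by_region, key=prices_by_region.get)
    difference = prices_by_region[priciest] - prices_by_region[cheapest]
    return cheapest, priciest, difference


# Made-up prices for the same hotel room, as seen from three regions.
observed = {"US": 120.0, "DE": 110.0, "BR": 95.0}
print(price_spread(observed))
```

A large spread between regions is a hint that the site applies dynamic or geo-targeted pricing to that product.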

Market Research

This is the process of gathering and analyzing information about the market, such as the needs, preferences, or behavior of the customers, competitors, or industry. Residential proxies can help market researchers to collect valid and diverse data, as they can access the market from different locations and devices, and avoid the bias and distortion of the data. For example, a market researcher can use them to build sales intelligence software or to conduct surveys, interviews, or focus groups with different customers, competitors, or industry experts, without revealing their real IP address or location.

eCommerce

eCommerce involves buying and selling goods or services online, such as on Amazon, eBay, or Shopify. Residential IP addresses can help eCommerce users to improve their online shopping experience, as they can access the online stores from different locations and devices, and enjoy the benefits of the local market. For example, an eCommerce user can use them to buy or sell goods or services from different countries or regions, without being limited by the geo-restrictions, currency conversions, or shipping fees of the websites.

App and Website Testing

This is the process of checking and evaluating the functionality, usability, and performance of an app or a website, such as on Android, iOS, or Chrome. Residential proxy services can help app and website testers to test their app or website from different perspectives, as they can access the app or website from different locations and devices, and simulate the real user experience. For example, an app or website tester can use residential proxies to test the loading speed, layout, or features of their app or website on different browsers, operating systems, or screen resolutions, without being restricted by their own IP address or location.

Ad Verification

This process involves verifying and validating the delivery and quality of online advertisements, such as on Google Ads, Facebook Ads, or YouTube Ads. A residential proxy network can help ad verifiers to monitor and optimize their online advertising campaigns, as they can access the online platforms from different locations and devices, and avoid the fraud and manipulation of the ads. For example, an ad verifier can use it to check the visibility, placement, or performance of their ads on different websites, apps, or videos, without being deceived or blocked by the websites, competitors, or bots.

Accessing Local Content

This is the process of accessing online content that is only available or relevant in a specific location, such as on Netflix, Hulu, or BBC iPlayer. A residential proxy service can help online content users to access local content, as they can access the online platforms from different locations and devices and bypass the geo-restrictions and censorship of the websites. For example, an online content user can use it to watch movies, shows, or sports that are only available or popular in a certain country or region, without being limited by their own IP address or location.

How Proxy Providers Acquire Residential IPs

There are legitimate and illegitimate methods of obtaining residential IP addresses, which have different implications for the proxy provider, the proxy user, and the device owner.

SDKs

Software development kits allow developers to integrate certain features or functions into their applications. Some residential proxy providers offer SDKs that allow app developers to monetize their apps by sharing the residential IP addresses of their app users with the proxy network. The app users can opt in to or opt out of the proxy network, and receive a service or a reward in return for sharing their bandwidth. For example, Infatica offers an SDK that allows app developers to earn money by sharing the real IP addresses of their app users with the Infatica proxy network.

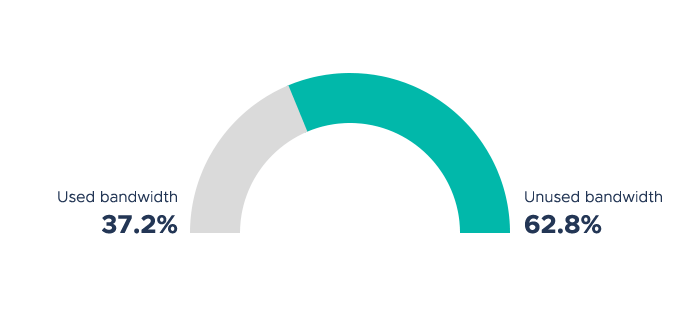

Buying unused bandwidth

This method involves buying excess bandwidth on residential connections from ISPs or device owners. Some proxy providers partner with ISPs or device owners to buy their unused bandwidth and use it for their proxy network. The ISPs or device owners earn money by selling bandwidth they would not otherwise use, and the proxy users gain access to the corresponding residential IP addresses.

Leasing IP spaces

This method involves leasing blocks of residential IP addresses from ISPs or device owners. Some proxy service providers rent these IP address spaces and use them for their proxy network. The ISPs or device owners make money by renting out their IP address spaces, and the proxy users gain access to the corresponding residential IP addresses.

Unfortunately, there are malicious means of sourcing IPs, too:

Turning users' devices into proxies without consent

This method involves hijacking the residential IP addresses of unsuspecting users and using them for the proxy network. Some proxy providers use malicious methods, such as hacking, malware, or botnets, to infect the devices of unsuspecting users and turn them into proxies without their knowledge or permission. The proxy providers can access the residential IP addresses of the infected devices, and the proxy users can use them for their purposes.

This method exposes the device owners to various risks, such as identity theft, data breaches, or legal liability. For example, in 2015, the Hola VPN service was criticized for turning its users' devices into proxies without their informed consent and selling their bandwidth through the residential proxy provider Luminati (now Bright Data).

Using stolen or fake credentials

Using stolen or fake credentials is a method that involves using the residential IP addresses of legitimate users by impersonating them or stealing their credentials. Some proxy providers use fraudulent methods, such as phishing, spoofing, or cracking, to obtain the login details or personal information of legitimate users and use them to access their residential IP addresses. The proxy providers can use the residential IP addresses of the legitimate users, and the proxy users can use them for their purposes.

This method violates the privacy and security of the legitimate users and may result in account suspension or legal action. For example, some proxy providers use stolen or fake credentials to access the residential IP addresses of Netflix or Hulu users.

Different Types of Residential Proxies

There are different types of proxies – each with different advantages and disadvantages regarding security, cost, speed, reliability, complexity of setup, and resistance to blocking.

Shared

Shared residential proxies use IP addresses that are shared between multiple users simultaneously. They are among the cheapest in the market, but they may have lower performance and higher risk of being blocked or misused by other users. They are suitable for tasks that do not require high speed, security, or exclusivity, such as web scraping, geo-unblocking, or managing multiple social media accounts.

Dedicated

Dedicated residential proxies use IP addresses that are reserved for a single user only. They are among the most expensive in the market, but they offer the highest level of performance, security, and privacy. They are ideal for tasks that require high speed, security, or exclusivity, such as SEO monitoring, banking, or sneaker bots. Depending on the provider, they may also provide the user with a new IP address after a certain period.

Rotating

Rotating residential proxies use residential IP addresses that change after a certain time or number of requests. They are usually priced based on traffic, rather than IP. They offer a high level of anonymity and diversity, as they can access websites from different locations and devices. They are suitable for tasks that require high rotation, such as web scraping, ad verification, or market research. A rotating residential proxy can also be customized to fit the user's needs, such as the rotation frequency, the country, or the ISP of the IP.
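Rotation by request count can be sketched on the client side as follows. Many providers rotate IPs on their own gateway, so this is only an illustration of the idea, not how any particular service implements it. The function name `rotating_proxies` is hypothetical, and the pool addresses come from the `192.0.2.0/24` range reserved for documentation.

```python
from itertools import cycle, islice
from typing import Iterator, Sequence


def rotating_proxies(pool: Sequence[str], requests_per_ip: int) -> Iterator[str]:
    """Yield a proxy for each request, switching to the next after N requests."""
    if not pool:
        raise ValueError("proxy pool is empty")
    if requests_per_ip < 1:
        raise ValueError("requests_per_ip must be at least 1")
    for proxy in cycle(pool):
        for _ in range(requests_per_ip):
            yield proxy


# Placeholder pool using documentation-only IP addresses.
POOL = ["http://192.0.2.10:8000", "http://192.0.2.11:8000"]

# Proxies chosen for the first five requests, rotating every two requests.
first_five = list(islice(rotating_proxies(POOL, requests_per_ip=2), 5))
print(first_five)
```

A time-based rotation would follow the same pattern, with the inner loop replaced by a check against a timestamp.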

Pros and Cons of Using Residential Proxies

Here are the main benefits that come with this proxy server type:

- High anonymity: Their IP addresses are almost indistinguishable from those of regular users. They can hide your real IP and location, and make it seem like you are accessing content from a different device. This can help you avoid online tracking, censorship, or geo-restrictions.

- Low risk of being blocked: Residential IP addresses are assigned by ISPs to real households, so websites tend to trust them more than datacenter addresses. They are less likely to trigger anti-bot measures and captchas, and can make multiple requests with a lower chance of being banned or throttled. This can help you to access content and data that are otherwise unavailable or limited.

- Large and diverse pool of IP addresses: You get access to a large and diverse pool of IP addresses from over 140 countries and regions. This way, you can choose the country, city, or ISP of the residential IP address you want to use, and enjoy the benefits of the local market. You can also change the IP address after a certain time or number of requests, and access websites from different locations and devices.

However, you need to keep in mind that there are some downsides, too:

- Higher cost: This proxy type is among the most expensive kinds of proxies in the market, as it uses residential IP addresses that are scarce and valuable. Residential proxy servers are usually priced based on traffic, rather than IP. You may have to pay a high fee to access the residential proxy network, and a higher fee to access more features (e.g. network protection) and benefits. You may also have to pay extra for dedicated or rotating residential proxies, which offer more performance and security.

- Performance and speed reduction: They may have lower speed and performance than other types of proxies, as traffic is routed through residential devices and home connections that may be shared between multiple users. They may have lower bandwidth, higher complexity, higher latency, or a higher error rate than datacenter proxies, which run on dedicated servers in data centers. They may also have lower stability and reliability than mobile proxies, which use mobile IP addresses that are connected to cellular networks.
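Because residential proxies are usually billed by traffic, a quick estimate of monthly volume helps when comparing plans. The sketch below is simple arithmetic under stated assumptions: the request count, average response size, and price per gigabyte are hypothetical numbers, not any provider's real pricing, and the function name `estimate_monthly_cost` is made up for this example.

```python
def estimate_monthly_cost(
    requests_per_day: int,
    avg_response_kb: float,
    price_per_gb: float,
    days: int = 30,
) -> float:
    """Estimate traffic-based cost, using decimal units (1 GB = 1,000,000 KB)."""
    total_kb = requests_per_day * avg_response_kb * days
    total_gb = total_kb / 1_000_000
    return round(total_gb * price_per_gb, 2)


# Hypothetical workload: 10,000 requests a day at 500 KB each, 5.0 per GB.
# 10,000 * 500 KB * 30 days = 150 GB, so the estimate is 150 * 5.0.
print(estimate_monthly_cost(10_000, 500, 5.0))
```

The estimate counts only response bodies; request headers, retries, and failed requests also consume billed traffic, so real usage tends to be somewhat higher.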

Final Words

We hope you enjoyed this article and learned something new about residential proxies. You should always do your research and exercise caution before using a proxy. Consider Infatica as your proxy provider to make your online activities more efficient: it offers ethical, high-performance, and web scraping-friendly proxy networks.