Even though you can see quite a lot of specialists advising to use user agents for scraping, it’s not a very common practice. Yet, such a simple addition as a user agent (abbreviated to UA) can make a huge difference by automating and streamlining data gathering. So if you never used such a tool, here is your sign that you should try it.

In this article, we're taking a closer look at user agents for scraping: We'll define what they do, see their examples, and understand their importance.

What are user agents?

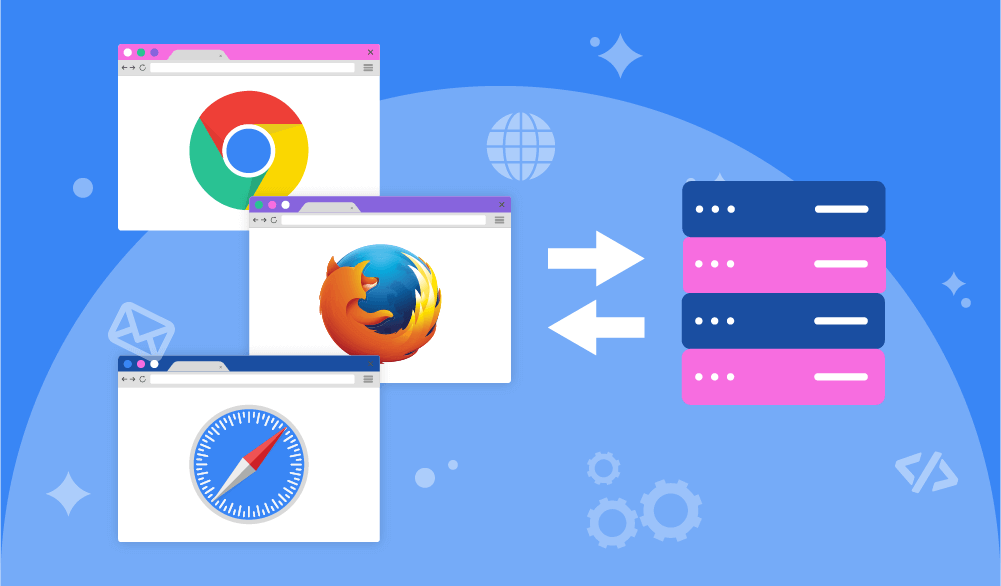

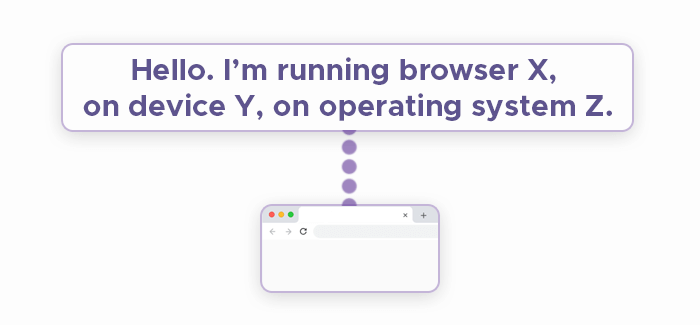

A user agent is an identifier that the destination server uses to understand which browser, operating system, and device the given visitor is using.

Mozilla's developer portal provides a helpful overview of what kind of information user agents typically contain:

User-Agent: Mozilla/5.0 (<system-information>) <platform> (<platform-details>) <extensions>Here's what an iPhone user agent looks like:

Mozilla/5.0 (iPhone; CPU iPhone OS 12_2 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) Mobile/15E148If you look at a UA, you will see just a text string that contains all the necessary information. The client sends this data through web scraping headers of a request every time a connection with the destination server is established. Then, the server will prepare a response that is suitable for a specific combination of a browser, operating system, and device.

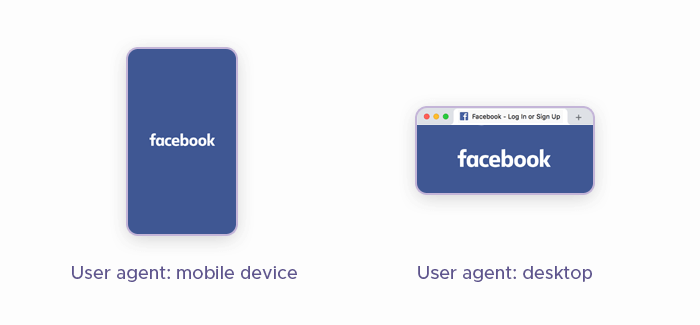

Here is an example of how it works: When you pop on Facebook using your laptop, you will be presented with a desktop version of this website. Try using a browser on your smartphone for this — and you’ll see a mobile version. A server understands which version to show thanks to a user agent it receives.

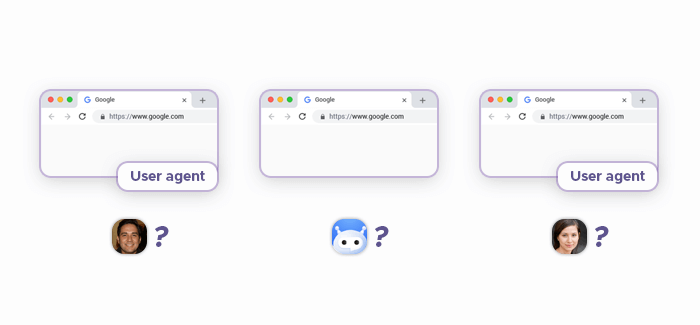

Since a user agent is just a string of text, it’s not difficult to change it and trick the destination server. That’s why it’s useful to add user agents to the web scraping process — to make servers believe they’re being visited by different users from different devices.

Why is it important to use user agents?

As we’ve mentioned, user agents for web scraping aren't that common. But it would be smarter to add this tool to your array of scraping instruments, especially considering how advanced anti-scraping technologies have become. If even a couple of years ago we could neglect user agents and have a rather smooth data gathering process using only a scraper and proxies, today the lack of a user agent library will most likely make us face constant bans.

A web scraper by default sends requests without a user agent, and that’s very suspicious for servers. They can instantly understand they’re dealing with a bot if a request doesn’t provide data about a user. So it’s much better to add this extra step and start using a library of user agents if you want to gather data efficiently.

How to achieve the best results with user agents?

Using this tool won’t give you the smooth process you desire if you just apply user agents without analyzing its strong and weak points. Here are some tips that will help you get the most out of them.

Opt for popular user agents

You can find different libraries of user agents, and it’s better to choose popular ones. Servers become very suspicious of UAs that don’t belong to major browsers, and most likely, they will block such requests. Also, stick to user agents that match the browser you’re using for scraping to make them match the default behavior of this browser.

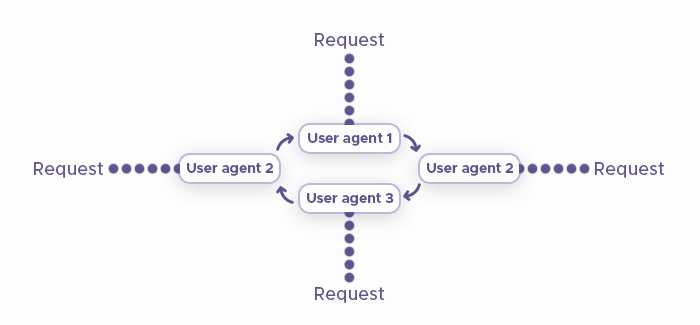

Rotate both proxies and user agents

It’s important to rotate proxies during web scraping to change IP addresses and make a destination server believe that requests are sent from different users. The same rule works for user agents. If you just stick to the same UA for several requests, you will inevitably get blocked.

Rotate user agents with each request just like you do it with proxies to achieve convincing requests that won’t make a destination server suspect it’s dealing with a bot. Usually, rotation of web scraping user agents is achieved viaPython and Selenium, and you will find numerous detailed guides online that will help you master this tool.

The bottom line

No matter how advanced a scraper is and how well it can deal with CAPTCHAs, you still need to improve it with proxies and user agent libraries. Both tools require rotation that will assign a new proxy and UA to each request. Once you have the rotation automated, you will achieve the smoothest data gathering process.

Frequently Asked Questions

User-Agent: android:com.example.myredditapp:v1.2.3 (by /u/kemitche).

Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:35.0) Gecko/20100101 Firefox/35.0. Looking at it, we see the product name and version (Mozilla/5.0), layout engine and version (Gecko/20100101), as well as technical details like platform.

headers = {'Mozilla/5.0 (iPad; U; CPU OS 3_2_1 like Mac OS X; en-us) AppleWebKit/531.21.10 (KHTML, like Gecko) Mobile/7B405'}