Data as a Service (DaaS) is a relatively new data distribution model in which the end customer hands the collection, management, and storage of information over to a dedicated service provider.

Today we will talk about the pros and cons of this model, its current technical issues, and how proxy technology can help solve them.

Why use DaaS

Numbers are the best way to understand how important data is for business. According to statistics, the number of search queries that include the phrase "near me" has increased by 900%. This shows the rising demand for personalization. To provide truly personalized services, you need to get the user's data somehow, or they will remain just a "visitor." This is not an easy task.

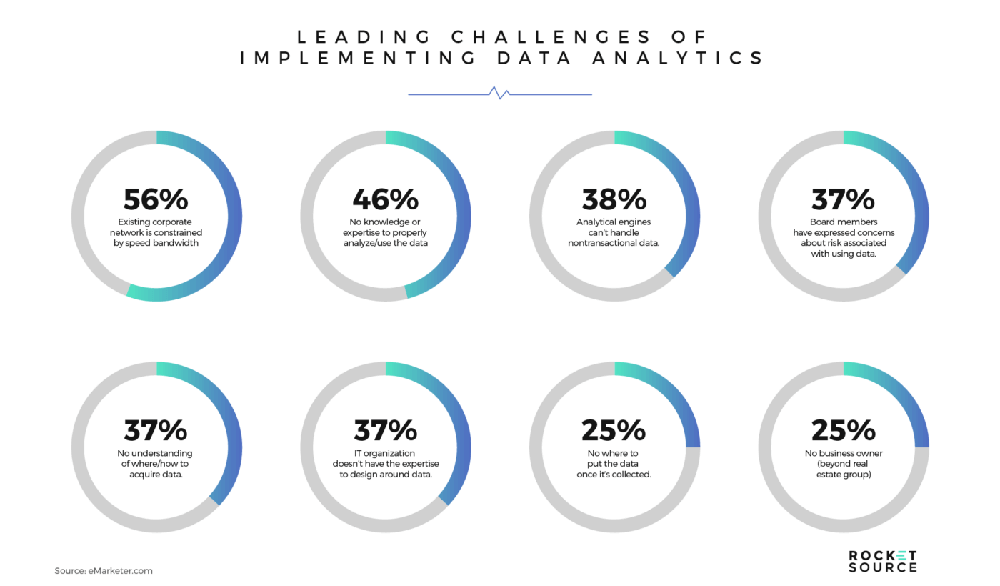

According to multiple research publications, common big data problems include:

- a lack of knowledge and data management skills (46% of cases),

- insufficient technical capabilities (56%),

- analytical systems that can't handle significant volumes of nontransactional data (38%),

- a lack of understanding of how to put the data to practical use once it is collected (25%).

DaaS providers help businesses solve these problems by giving them ready-to-use, actionable data sets collected according to predefined requirements. Usually, the information is tailored to a specific industry and answers particular business questions. Such data sets should be easy to interpret and to use in decision-making.

It sounds excellent: companies with vast experience and a dedicated infrastructure for data collection and management do the job for you. All you have to do is interpret the data set and make a business decision. But not so fast. DaaS tools have their own problems, and the biggest one is whether they can collect correct data in every situation. Let's dive deeper into the issue.

The main DaaS problem

How exactly do DaaS companies collect data? They have a dedicated infrastructure and data collection scripts that download data from websites and search engines. Such scripts are called crawlers or scrapers.

For example, if a B2B customer needs information for SEO, it is a good idea to download and analyze data from their competitors' websites first. The keywords competitors use, their search engine rankings, and similar details are very useful to know before the real search engine optimization starts. To get such data, the scraping bot visits the relevant websites and "scrapes" the specified information.
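As a rough illustration of the "scraping" step, here is a minimal sketch that pulls the page title and meta keywords out of an HTML document using only the Python standard library. The names `SeoParser`, `scrape_seo_fields`, and `SAMPLE_HTML` are hypothetical and exist only for this example; a production scraper would also handle downloading, errors, and many more fields.

```python
from html.parser import HTMLParser


class SeoParser(HTMLParser):
    """Collects the <title> text and the meta keywords of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.keywords = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "keywords":
            # Keywords are conventionally a comma-separated list.
            content = attrs.get("content") or ""
            self.keywords = [k.strip() for k in content.split(",") if k.strip()]

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def scrape_seo_fields(html):
    """Return the title and keywords found in an HTML string."""
    parser = SeoParser()
    parser.feed(html)
    return {"title": parser.title.strip(), "keywords": parser.keywords}


# Hypothetical page content standing in for a downloaded competitor page.
SAMPLE_HTML = """
<html><head>
<title>Acme Coffee Roasters</title>
<meta name="keywords" content="coffee, espresso beans, roastery">
</head><body><h1>Welcome</h1></body></html>
"""

print(scrape_seo_fields(SAMPLE_HTML))
```

The sketch works on a string that is already in memory, so it can be tried without sending any requests.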

The owners of these websites and search engines may not be happy about someone scraping their pages. In most situations, they will try to ban your bot. Crawlers usually run from so-called datacenter IP addresses without proper rotation, so they are straightforward to identify and block. There are a lot of anti-bot systems out there nowadays.

Getting your scraping bot blocked is bad enough, but the situation is even worse if the website owners decide to feed your bot fake data instead of simply blocking it. You will end up with a data set that contains wrong data. If you then use it for decision-making, the best you can expect is that the decisions have no effect; in the worst case, you will lose a lot of money.

The solution: residential proxies

The main DaaS problem can be solved by using residential proxies for scraping. Unlike datacenter IPs, which are assigned by hosting providers, residential proxies use IP addresses assigned to regular users (homeowners) by their ISPs. Datacenter IPs are easy to reveal and block by their ASN (autonomous system number), which identifies the network that announces the address; residential addresses are much harder to single out this way.
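To make the ASN idea more concrete, here is a minimal sketch of how an anti-bot filter might classify a visitor's IP address. Everything in it is an assumption made for illustration: the `PREFIX_TABLE` entries use reserved documentation address ranges and private-use ASNs, and the "hosting" and "isp" labels are hypothetical. A real system would load this mapping from routing or registry data.

```python
import ipaddress

# Hypothetical prefix-to-ASN table (documentation ranges, private-use ASNs).
# Each entry: (network, ASN, kind of network operator).
PREFIX_TABLE = [
    (ipaddress.ip_network("192.0.2.0/24"), 64512, "hosting"),
    (ipaddress.ip_network("198.51.100.0/24"), 64513, "hosting"),
    (ipaddress.ip_network("203.0.113.0/24"), 64514, "isp"),
]


def classify_ip(ip_text):
    """Return (asn, kind) for an IP address, or (None, "unknown")."""
    ip = ipaddress.ip_address(ip_text)
    for network, asn, kind in PREFIX_TABLE:
        if ip in network:
            return asn, kind
    return None, "unknown"


def should_block(ip_text):
    """Block visitors whose address belongs to a hosting provider's ASN."""
    _, kind = classify_ip(ip_text)
    return kind == "hosting"


for visitor in ("192.0.2.10", "203.0.113.7"):
    print(visitor, classify_ip(visitor), "block" if should_block(visitor) else "allow")
```

In this sketch the datacenter address is blocked because of the network it belongs to, while the address from the ISP range is allowed through, regardless of what the request itself contains.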

There are a lot of tools for automated ASN analysis. Many of them are integrated with anti-bot systems so that scraping bots can be detected faster and then blocked or tricked with fake data. Residential addresses, however, belong to ISP networks that serve regular users. This means that, at the network level, a request sent by a scraping bot through a residential proxy looks like one generated by a regular website visitor. Website owners do not want to block potential customers, so such requests are far less likely to be caught by the anti-bot system.
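For readers who want to see what routing requests through a pool of proxies could look like, here is a minimal sketch based on Python's standard `urllib.request.ProxyHandler`. The proxy URLs in `PROXY_POOL` and the `RotatingProxyClient` class are hypothetical placeholders, not the API of any particular provider; real services typically supply their own endpoints and credentials.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; replace with the ones your provider gives you.
PROXY_POOL = [
    "http://proxy-a.example.com:8080",
    "http://proxy-b.example.com:8080",
    "http://proxy-c.example.com:8080",
]


class RotatingProxyClient:
    """Sends each request through the next proxy in a round-robin pool."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self):
        """Return the proxy to use for the next request."""
        return next(self._cycle)

    def build_opener(self):
        """Create a urllib opener bound to the next proxy in the pool."""
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return proxy, urllib.request.build_opener(handler)

    def fetch(self, url, timeout=10):
        """Download a URL through the next proxy; returns (proxy, body bytes)."""
        proxy, opener = self.build_opener()
        with opener.open(url, timeout=timeout) as response:
            return proxy, response.read()


client = RotatingProxyClient(PROXY_POOL)
# Show the rotation order without sending any network traffic.
print([client.next_proxy() for _ in range(4)])
```

Round-robin rotation is only one possible policy, chosen here for simplicity; rotating on a timer or after a failed request are common alternatives.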

Infatica provides such a residential proxy solution. By using our rotating residential proxies, you can be sure that the quality of the collected data will always be excellent. Your scraping bots will never get blocked!

More articles on how residential proxies are useful for business